The IEDM conference will be held December 3-7, 2022, at the Hilton San Francisco Union Square. Starting December 12, the full conference will be available on-demand. The full technical program is available here:

https://www.ieee-iedm.org/s/program2022-webiste-rev-002-779a.pdf

There are a couple of sessions of potential interest to the image sensors community.

Session 37: ODI - Silicon Image Sensors and Photonics

Wednesday, December 7, 1:30 p.m.

37.1 Coherent Silicon Photonics for Imaging and Ranging (Invited), Ali Hajimiri, Aroutin Khachturian, Parham Khial, Reza Fatemi, California Institute of Technology

Silicon photonics platforms and their potential for integration with CMOS electronics present novel opportunities in applications such as imaging, ranging, sensing, and displays. Here, we present ranging and imaging results for a coherent silicon-imaging system that uses a two-path quadrature (IQ) approach to overcome optical path length mismatches.

37.2 Near-Infrared Sensitivity Enhancement of Image Sensor by 2nd-Order Plasmonic Diffraction and the Concept of Resonant-Chamber-Like Pixel, Nobukazu Teranishi, Takahito Yoshinaga, Kazuma Hashimoto, Atsushi Ono, Shizuoka University

We propose 2nd-order plasmonic diffraction and the concept of a resonant-chamber-like pixel to enhance the near-infrared (NIR) sensitivity of Si image sensors. Optical requirements for deep trench isolation are explained. In the simulation, Si absorptance as high as 49% at 940 nm wavelength for 3.25-µm-thick Si is obtained.

37.3 A SPAD Depth Sensor Robust Against Ambient Light: The Importance of Pixel Scaling and Demonstration of a 2.5µm Pixel with 21.8% PDE at 940nm, S. Shimada, Y. Otake, S. Yoshida, Y. Jibiki, M. Fujii, S. Endo, R. Nakamura, H. Tsugawa, Y. Fujisaki, K. Yokochi, J. Iwase, K. Takabayashi*, H. Maeda*, K. Sugihara*, K. Yamamoto*, M. Ono*, K. Ishibashi*, S. Matsumoto, H. Hiyama, and T. Wakano, Sony Semiconductor Solutions, *Sony Semiconductor Manufacturing

This paper presents scaled-down SPAD pixels to prevent PDE degradation under high ambient light. This study is carried out on back-illuminated structures with 3.3, 3.0, and 2.5µm pixel pitches. Our new SPAD pixels can achieve PDE at λ=940nm of over 20% and a peak of over 75%, even with a 2.5µm pixel.

37.4 3-Tier BSI CIS with 3D Sequential & Hybrid Bonding Enabling a 1.4µm pitch, 106dB HDR Flicker Free Pixel, F. Guyader, P. Batude*, P. Malinge, E. Vire, J. Lacord*, J. Jourdon, J. Poulet, L. Gay, F. Ponthenier*, S. Joblot, A. Farcy, L. Brunet*, A. Albouy*, C. Theodorou**, M. Ribotta*, D. Bosch*, E. Ollier*, D. Muller, M. Neyens, D. Jeanjean, T. Ferrotti, E. Mortini, J.G. Mattei, A. Inard, R. Fillon, F. Lalanne, F. Roy, E. Josse, STMicroelectronics, *CEA-Leti, Univ. Grenoble Alpes, **Univ. Grenoble Alpes, Univ. Savoie Mont Blanc, CNRS, Grenoble INP, IMEP-LAHC

A 3-tier CIS combining 3D Sequential Integration for the 2-tier pixel realization & Hybrid Bonding for the logic circuitry connection is demonstrated. Thin film pixel transistors are built above photo-gate without congestion. Dual carrier collection 3DSI pixel offers an attractive dynamic range (106dB, Single Exposure) versus pixel pitch (1.4µm) trade-off.

37.5 3-Layer Stacked Voltage-Domain Global Shutter CMOS Image Sensor with 1.8µm-Pixel-Pitch, Seung-Sik Kim, Gwi-Deok Ryan Lee, Sang-Su Park, Heesung Shim, Dae-Hoon Kim, Minjun Choi, Sangyoon Kim, Gyunha Park, Seung-Jae Oh, Joosung Moon, Sungbong Park, Sol Yoon, Jihye Jeong, Sejin Park, Sanggwon Lee, HaeJung Lee, Wonoh Ryu, Taehyoung Kim, Doowon Kwon, Hyuk Soon Choi, Hongki Kim, Jonghyun Go, JinGyun Kim, Seunghyun Lim, HoonJoo Na, Jae-kyu Lee, Chang-Rok Moon, Jaihyuk Song, Samsung Electronics

We developed a 1.8µm-pixel GS sensor which is suitable for mobile applications. Pixel shrink was possible by the 3-layer stacking structure with pixel-level Cu-to-Cu bonding and high-capacity DRAM capacitors. As a result, excellent performance was achieved, i.e. -130dB, 1.8e- rms and 14ke- of PLS, TN and FWC, respectively.

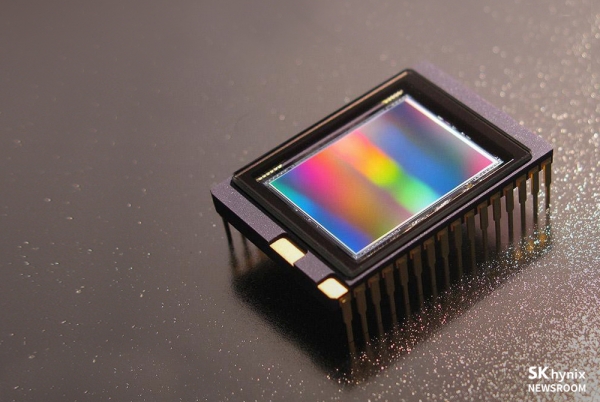

37.6 Advanced Color Filter Isolation Technology for Sub-Micron Pixel of CMOS Image Sensor, Hojin Bak, Horyeong Lee, Won-Jin Kim, Inho Choi, Hanjun Kim, Dongha Kim, Hanseung Lee, Sukman Han, Kyoung-In Lee, Youngwoong Do, Minsu Cho, Moung-Seok Baek, Kyungdo Kim, Wonje Park, Seong-Hun Kang, Sung-Joo Hong, Hoon-Sang Oh, and Changrock Song, SK hynix Inc.

The novel color filter isolation technology, which adopts the air, the lowest refractive index material on the earth, as a major component of an optical grid for sub-micron pixels of CMOS image sensors, is presented. The image quality improvement was verified through the enhanced optical performance of the air-grid-assisted pixels.

37.7 A 140 dB Single-Exposure Dynamic-Range CMOS Image Sensor with In-Pixel DRAM Capacitor, Youngsun Oh, Jungwook Lim, Soeun Park, Dongsuk Yoo, Moosup Lim, Joonseok Park, Seojoo Kim, Minwook Jung, Sungkwan Kim, Junetaeg Lee, In-Gyu Baek, Kwangyul Ryu, Kyungmin Kim, Youngtae Jang, Min-Sun Keel, Gyujin Bae, Seunghun Yoo, Youngkyun Jeong, Bumsuk Kim, Jungchak Ahn, Haechang Lee, Joonseo Yim, Samsung Electronics Co., Ltd.

A CMOS image sensor with a 2.1 µm pixel for automotive applications was developed. With a sub-pixel structure and a high-capacity DRAM capacitor, a single-exposure dynamic range of 140 dB is achieved at 85°C, supporting LED flicker mitigation and blooming-free operation. SNR stays above 23 dB at 105°C.

Session 19: ODI - Photonic Technologies and Non-Visible Imaging

Tuesday, December 6, 2:15 p.m.

19.1 Record-low Loss Non-volatile Mid-infrared PCM Optical Phase Shifter based on Ge2Sb2Te3S2, Y. Miyatake, K. Makino*, J. Tominaga*, N. Miyata*, T. Nakano*, M. Okano*, K. Toprasertpong, S. Takagi, M. Takenaka, The University of Tokyo, *National Institute of Advanced Industrial Science and Technology (AIST)

We propose a low-loss non-volatile PCM phase shifter operating at mid-infrared wavelengths using Ge2Sb2Te3S2 (GSTS), a new selenium-free widegap PCM. The GSTS phase shifter exhibits a record-low optical loss for π phase shift of 0.29 dB/π, more than 20 times better than reported so far in terms of figure-of-merit.

19.2 Monolithic Integration of Top Si3N4-Waveguided Germanium Quantum-Dots Microdisk Light Emitters and PIN Photodetectors for On-chip Ultrafine Sensing, C-H Lin, P-Y Hong, B-J Lee, H. C. Lin, T. George, P-W Li, National Yang Ming Chiao Tung University

An ingenious combination of lithography and self-assembled growth has allowed accurate control over the geometric with high-temperature thermal stability. This significant fabrication advantage has opened up the 3D integration feasibility of top-SiN waveguided Ge photonics for on-chip ultrafine sensing and optical interconnect applications.

19.3 Colloidal quantum dot image sensors: a new vision for infrared (Invited), P. Malinowski, V. Pejovic*, E. Georgitzikis, JH Kim, I. Lieberman, N. Papadopoulos, M.J. Lim, L. Moreno Hagelsieb, N. Chandrasekaran, R. Puybaret, Y. Li, T. Verschooten, S. Thijs, D. Cheyns, P. Heremans*, J. Lee, imec, *KULeuven

Short-wave infrared (SWIR) range carries information vital for augmented vision. Colloidal quantum dots (CQD) enable monolithic integration with small pixel pitch, large resolution and tunable cut-off wavelength, accompanied by radical cost reduction. In this paper, we describe the challenges to realize manufacturable CQD image sensors enabling new use cases.

19.4 Grating-resonance InGaAs narrowband photodetector for multispectral detection in NIR-SWIR region, J. Jang, J. Shim, J. Lim, G. C. Park*, J. Kim**, D-M Geum, S. Kim, Korea Advanced Institute of Science and Technology (KAIST), *Electronics and Telecommunications Research Institute (ETRI), **Korea Advanced Nano Fab Center (KANC)

We propose a grating-resonance narrowband photodetector for wavelength selection in the range of 1300 to 1700 nm. Based on parameters designed from the simulation, we fabricated an array of pixels to selectively detect different wavelengths. Our device showed great wavelength selectivity and tunability depending on grating design with a narrow FWHM.

19.5 Alleviating the Responsivity-Speed Dilemma of Photodetectors via Opposite Photogating Engineering with an Auxiliary Light Source beyond the Chip, Y. Zou, Y. Zeng, P. Tan, X. Zhao, X. Zhou, X. Hou, Z. Zhang, M. Ding, S. Yu, H. Huang, Q. He, X. Ma, G. Xu, Q. Hu, S. Long, University of Science and Technology of China

The dilemma between responsivity and speed limits the performance of photodetectors. Here, opposite photogating engineering was proposed to alleviate this dilemma via an auxiliary light source beyond the chip. Based on a WSe2/Ga2O3 JFET, a >10^3 times faster speed towards deep ultra-violet has been achieved with negligible sacrifice of responsivity.

19.6 Experimental Demonstration of the Small Pixel Effect in an Amorphous Photoconductor using a Monolithic Spectral Single Photon Counting Capable CMOS-Integrated Amorphous-Selenium Sensor, R. Mohammadi, P. M. Levine, K. S. Karim, University of Waterloo

We directly demonstrate, for the first time, the small pixel effect in an amorphous material, a-Se. The results are also the first demonstration of the transient response of a-Se monolithically combined with a CMOS, with and without SPE, and the first a-Se/CMOS PHS results, offering a-Se/CMOS for photon counting applications.