PRNewswire:

SeeDevice announces a licensing agreement with MegaChips Corporation, a fabless LSI company. The agreement allows MegaChips to integrate SeeDevice's Photon Assisted Tunneling - Photo Detector (PAT-PD) smart vision sensor into its products. So far, MegaChips has not had image sensor products in its portfolio.

"

This licensing agreement is a validation of our technology maturity and ability to serve a major partner and supplier like MegaChips. Our PAT-PD sensor not only outperforms existing image sensors, it helps create an entirely new category of photon sensing capability," said Hooman Dastghaib, CEO of SeeDevice. "

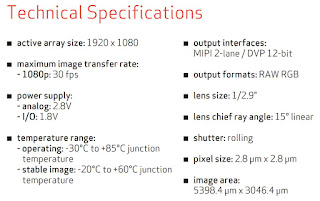

For example, sensitivity for photo-diodes is measured in uV per electron generated, or amps/watt (A/W), and generates a relatively low responsiveness of less than 1 A/W. Our PAT-PD sensor, using quantum tunneling, can produce a variable output between 102 and 108 A/W, far surpassing the ability of today's leading CMOS image sensors, producing higher-quality images in a wider variety of lighting conditions."
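To put the A/W figures in context, here is a rough sketch of the textbook responsivity of an ideal photodiode, R = QE · q · λ / (h · c), which has no internal gain. It is not SeeDevice's model; the function names and the 1 nW optical power are assumptions chosen only for illustration. With unity quantum efficiency, R stays below 1 A/W for visible light and up to about 1 µm, which matches the "less than 1 A/W" remark in the quote.

```python
# Ideal (gain-free) photodiode responsivity: R = QE * q * wavelength / (h * c).
# Function names and the 1 nW input below are illustrative assumptions,
# not values taken from the press release.

Q_E = 1.602176634e-19   # elementary charge, coulombs
H = 6.62607015e-34      # Planck constant, joule-seconds
C = 299792458.0         # speed of light, metres per second


def ideal_responsivity(wavelength_m, quantum_efficiency=1.0):
    """Responsivity in A/W of a photodiode with no internal gain."""
    return quantum_efficiency * Q_E * wavelength_m / (H * C)


def photocurrent(responsivity_a_per_w, optical_power_w):
    """Output current in amperes for a given incident optical power."""
    return responsivity_a_per_w * optical_power_w


OPTICAL_POWER_W = 1e-9  # hypothetical 1 nW of incident light

for wavelength_nm in (450, 550, 1000):
    r = ideal_responsivity(wavelength_nm * 1e-9)
    print(f"{wavelength_nm} nm: ideal R = {r:.3f} A/W")

# The two ends of the responsivity range claimed for PAT-PD.
for r in (1e2, 1e8):
    i = photocurrent(r, OPTICAL_POWER_W)
    print(f"R = {r:.0e} A/W -> {i:.1e} A from 1 nW")
```

Any responsivity far above these ideal values implies internal gain, so the claimed 10² to 10⁸ A/W range describes an amplifying detector rather than a plain photodiode.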

PAT-PD also extends the photosensitive range of CMOS sensors beyond visible light into the NIR spectrum (300 nm to 1,600 nm), with plans to increase this to 2,000 nm in the next generation of sensors, pushing into the SWIR band.

Additionally, the PAT-PD sensor boosts photoelectric conversion efficiency to 1e7, while maintaining an SNR over 60 dB at room temperature. Reaction time is also reduced from microseconds to sub-nanoseconds, while dynamic range is boosted to 100 dB linear and 150 dB non-linear.
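For readers who prefer plain ratios to decibels, the short sketch below converts the quoted figures. It assumes the 20·log10 convention commonly used for image sensor SNR and dynamic range; the press release does not say which convention it uses, and the function name is only illustrative.

```python
# Convert decibel figures to linear ratios, assuming dB = 20 * log10(ratio).
# The 20*log10 convention is an assumption; the press release does not state it.


def db_to_ratio(db):
    """Linear ratio corresponding to a decibel value (20*log10 convention)."""
    return 10.0 ** (db / 20.0)


for label, db in (
    ("SNR", 60.0),
    ("linear dynamic range", 100.0),
    ("non-linear dynamic range", 150.0),
):
    print(f"{label}: {db:.0f} dB is about {db_to_ratio(db):.3g}:1")
```

Under that assumption, 60 dB corresponds to 1,000:1, 100 dB to 100,000:1, and 150 dB to roughly 3.2 × 10⁷:1.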

SeeDevice claims to achieve all of these results using a standard CMOS fabrication process, which means easier integration into mixed-signal processes and avoids the expensive exotic materials and manufacturing steps otherwise needed for similar results.

The quantum tunneling effect allows a photon-activated current flow to trigger using a fraction of the photons normally required in a photo-diode based design. This allows a PAT-PD sensor to trigger with just a single photon, generating a current with unprecedented efficiency and creating a signal with significantly less input over a much wider range of wavelengths. Using a PAT-PD silicon-based CMOS image sensor, devices can capture sharp, fine-grained details even in extremely low light conditions by utilizing infrared, near infrared, and short-wave infrared wavelengths.

There are a number of additional benefits PAT-PD provides to device makers:

- Global Shutter for CMOS Sensors

- Higher QE

- Higher Quality Low-Light Images

- Higher DR