PRNewswire: Facial recognition technology expands beyond human faces. Cargill and Cainthus, a Dublin-based machine vision company, are forming a strategic partnership that will bring facial recognition technology to dairy farms across the world.

Cainthus software uses images to identify individual animals based on hide patterns and facial recognition, and tracks key data such as food and water intake, heat detection and behavior patterns. The software then delivers analytics that drive on-farm decisions that can impact milk production, reproduction management and overall animal health.

Cargill and Cainthus intend to first focus on the global dairy segment, but will expand to other species, including swine, poultry and aqua over the next several months.

Wednesday, January 31, 2018

Lens Flare Limitation of DR

Imatest CTO & Founder Norman Koren discusses the rarely mentioned issue of lens flare, which often limits the DR of an imaging system, in his presentation at SPIE EI 2018 on January 29:

"The dynamic range of recent HDR image sensors, defined as the range of exposure between saturation and 0 dB SNR, can be extremely high: 120 dB or more. But the dynamic range of real imaging systems is limited by veiling glare (flare light), arising from reflections inside the lens, and hence rarely approaches this level."

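To see why flare can dominate, a rough back-of-the-envelope sketch may help. It assumes a deliberately simple model, not taken from Koren's presentation, in which veiling glare adds a uniform light pedestal equal to a fixed fraction of the brightest scene signal, so the darkest usable signal is set by the sensor floor plus that pedestal. The function name and the glare fractions are illustrative assumptions.

```python
import math


def effective_dr_db(sensor_dr_db: float, glare_fraction: float) -> float:
    """Estimate system dynamic range when veiling glare lifts the black level.

    Assumed model (hypothetical, for illustration only): the usable floor is
    the sensor's own floor plus a glare pedestal, both expressed relative to
    the saturation signal.
    """
    sensor_floor = 10 ** (-sensor_dr_db / 20)  # sensor floor relative to saturation
    floor = sensor_floor + glare_fraction      # glare adds to the floor
    return 20 * math.log10(1 / floor)


if __name__ == "__main__":
    # Glare fractions below are assumed values, not measurements.
    for glare in (0.0, 0.001, 0.01):
        print(f"glare {glare:>5.1%}: {effective_dr_db(120, glare):6.1f} dB")
```

Under this assumed model, a glare level of just 1% of the brightest signal would cap a 120 dB sensor at roughly 40 dB of system dynamic range.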
Vision Research Unveils 4MP 6,600fps Camera

Vision Research introduces the Phantom v2640, said to be the fastest 4MP camera available. It features a proprietary 4MP CMOS image sensor that delivers up to 26 Gpx/sec, while reaching 6,600fps at full 2048 x 1952 resolution, and 11,750fps at 1920 x 1080.

The v2640 features HDR of 64dB and the lowest noise floor of any Phantom camera (7.2 e-). It also has ISO of 16,000D for monochrome cameras and 3,200D for color cameras.

“In designing this new, cutting-edge sensor, we focused on capturing the best image in addition to meeting the speed and sensitivity requirements of the market. The 4-Mpx design significantly increases the information contained in an image allowing researchers to better understand and quantify the phenomena they are observing,” says Jay Stepleton, VP and GM of Vision Research.

The v2640 has multiple operating modes for increased flexibility. Standard mode uses CDS for the clearest image, while high-speed (HS) mode provides 34% higher throughput to achieve 6,600 fps. Monochrome cameras can incorporate “binning,” which converts the v2640 into a 1-Mpx camera that can reach 25,030fps with very high sensitivity.

Key Specifications of the Phantom v2640:

- 4MP sensor (2048 x 1952), 26Gpx/sec throughput

- Dynamic range: 64 dB

- Noise level: 7.2 e-

- ISO measurement: 16,000D (Mono), 3,200D (Color)

- 1 µs minimum exposure standard, 499ns / 142ns minimum exposure with export-controlled FAST option

- 4 available modes: Standard, HS and Binning (in Standard and HS)

- Standard modes feature CDS performed directly on the sensor to provide the lowest noise possible
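The headline figures can be cross-checked with simple arithmetic. The sketch below multiplies resolution by frame rate to get pixel throughput, and converts the quoted 64 dB dynamic range and 7.2 e- noise floor into an implied maximum signal, assuming the common definition DR = 20·log10(max signal / noise floor). That definition and the helper names are assumptions; the announcement does not state how the 64 dB figure was measured.

```python
def throughput_gpx_per_s(width: int, height: int, fps: float) -> float:
    """Pixel throughput in gigapixels per second."""
    return width * height * fps / 1e9


def implied_max_signal_e(dr_db: float, noise_e: float) -> float:
    """Max signal in electrons implied by DR = 20*log10(max / noise).

    The DR definition is an assumption made for this illustration.
    """
    return noise_e * 10 ** (dr_db / 20)


if __name__ == "__main__":
    print(f"full res: {throughput_gpx_per_s(2048, 1952, 6600):.1f} Gpx/s")
    print(f"1080p:    {throughput_gpx_per_s(1920, 1080, 11750):.1f} Gpx/s")
    print(f"implied max signal: {implied_max_signal_e(64, 7.2):.0f} e-")
```

At full resolution the product comes to about 26.4 Gpx/s, in line with the quoted 26 Gpx/sec.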

Tuesday, January 30, 2018

Analyst Predicts Sony Image Sensor Business Slowdown

Bloomberg: Sony's image sensor business is likely to weaken amid lower sales of Apple iPhones, an analyst at JPMorgan Chase & Co. wrote as he downgraded the company. iPhone X production will probably fall 50% QoQ and the weakness is likely to continue for the first half of the year as demand for high-end smartphones plateaus, according to J.J. Park. Sony gets half of its image sensor revenue from Apple, Park wrote. He also said the trend for adopting dual cameras is not as strong as first believed, including among Chinese phone makers, which will further hit Sony’s sales.

In Sony’s latest quarter, image sensors accounted for 9.4% of revenue and 22% of operating profit:

Sony is said to have a 70% market share for high-end image sensors in smartphones. “Despite a correction in Apple supply chain names, Sony has massively outperformed its global peers thanks to the structural trend of dual-cam,” Park wrote.

Samsung IntelligentScan Combines Iris and Face Recognition

XDA Developers: According to an APK teardown of the most recent Settings app from the latest unreleased Android Oreo beta, there appears to be a new feature in the works for the upcoming Samsung Galaxy S9 and Galaxy S9+. Called Intelligent Scan, the feature reportedly “combines face and iris scanning to improve accuracy and security even in low or very bright light.” Here is a piece of the code:

Innovatrics and NIT Present Face and Fingerprint ID Solutions

Innovatrics partners with New Imaging Technologies (NIT) to build turnkey solutions, comprising both hardware and software, for face recognition and fingerprint matching.

The first solution, coming in Q2 2018, will mainly address face recognition. Its hardware will be based on HDR sensors from NIT, overcoming the limitation of conventional face recognition systems in dealing with light variations in outdoor conditions. IFace, the latest facial biometric software solution from Innovatrics, will be combined with this hardware to provide a plug-and-play system for OEM or evaluation purposes. IFace has previously been integrated into several AFIS (Automatic Fingerprint Identification System) deployments and is said to deliver astonishing accuracy and high overall matching speed, thanks to a deep neural network core.

This joint development will include a state of the art 3D anti-spoofing feature, recently popularized by consumer devices.

“We are proud to co-work with an industry leader in biometrics technology. We have recently seen a rise of market demands, both on the consumer and professional side, for robust face recognition solutions. Most of the time, the identification failure is caused by image quality degradation. Images are lacking useful information, especially under uncontrolled lighting situations as it can occur in a day to day life. Having a combination of our HDR sensors and fast-accurate algorithms will be a clear answer to these situations,” says Yang Ni, NIT Founder and CTO.

The second focus of this partnership will be set on the fingerprint sensors and matching algorithms. The patented optical fingerprint sensor design from NIT, including under-glass model, will be integrated with Innovatrics matching algorithm, which was ranked first in accuracy in the recently published results of the Proprietary Fingerprint Template Evaluation II (PFT II). The first fully integrated standalone platform, including hardware & software, will be available around Q3 / Q4 2018.

Monday, January 29, 2018

LIPS Presents 3D Scanning for Smartphones

LIPS announces its "3D scanning and true 3D facial recognition solutions for Android mobile platforms. The solution aims squarely on providing iPhoneX face ID like unlock feature for Android platform as well as mobile 3D scanning.

It not only performs general 3D scanning but also 3D facial recognition through active IR laser projection over the scanned object and the two IR sensors that compute depth data for 3D reconstruction.

LIPS is targeting Q2 for an even smaller and thinner module that can potentially be integrated into phone’s internal modules."

Lightfield Imaging in Automotive Applications

AutoSens publishes a talk "Plenoptics: the ultimate imaging science and its application to automotive" by Atanas Gotchev, Centre for Immersive Visual Technologies (CIVIT), Tampere University of Technology, Finland:

SmartSens Announces High Sensitivity SmartPixel Brand

PRNewswire: SmartSens launches a new CMOS Sensor for clarity of low light photography, the SC4236. The SC4236 is 1/2.6-inch 4.5MP CMOS sensor in the SmartPixel series with pixel size of 2.5μm x 2.5μm and maximum frame rate of 30fps at full resolution. Based on the big pixel and advanced circuits, the SC4236 has excellent low-light performance with sensitivity reaching 3000mV/Lux-s, and its SNR1s performance is 0.39Lux. The SC4236 can adapt to the temperature ranging from -30ºC - 85ºC, compliant with industrial applications.

The SC4236 is aimed at security and surveillance systems, panoramic cameras, car DVRs, video conferencing and so on.

The SC4236 is compatible with a wide range of ISPs, including HiSilicon Hi3516V300/Hi3516D, Novatek NT98515, Ingenic T30, MStar 313E, Nextchip NVP2475 and Fullhan FH8538. Platform docking with more manufacturers will be carried out next. The SC4236 is expected to go into mass production in January 2018.

By now, SmartSens has a wide range of security products:

Himax Acquires 3D Nano Mastering Know-How

GlobeNewsWire: Himax acquires "certain advanced nano 3D masters manufacturing assets and related intellectual property and business from a US-based technology company. The transaction is expected to be closed in February 2018." Terms of the agreement and the name of the "US-based technology company" were not disclosed.

The advanced nano 3D manufacturing masters are primarily used in imprinting or stamping replication process to fabricate devices such as diffractive optical element (DOE), diffuser, collimator lens and micro lens array. The acquisition brings Himax the master tooling capability to supplement the company’s wafer level optics (WLO) technology, which is critical in its efforts to offer 3D sensing total solutions. In addition, certain intellectual properties such as those related to true grey scale image and micro lens arrays will enable Himax to enter into new markets such as biomedical, computational camera and niche displays, and develop more sophisticated DOE and diffuser for future generation 3D sensing solutions.

Himax IGI Precision Ltd. (“Himax IGI”), a wholly owned subsidiary, has been established for the said acquisition. Himax IGI, located in Minneapolis, Minnesota, will continue to invest in the development of state-of-the-art nano 3D mastering technology and solutions.

Jordan Wu, President and CEO of Himax, said, “The team that are joining us along with the acquisition are true experts in what they do and are arguably the best talents in the world in the small but very technically challenging field of nano 3D mastering. We sometimes got frustrated by the less than satisfactory result in our WLO product development, unsure whether the issue was caused by the quality of the master or our own product design or manufacturing. By adding mastering know-how into our family, we can surely enhance the overall WLO product quality and shorten our development cycle. I am sure many of our innovation driven customers will be thrilled to hear that finally someone has taken the right move to bring nano 3D mastering, design and manufacturing of high precision optical structure product all under the same roof.”

Update: As written in comments, the company that sold the mastering technology to Himax is probably IGI, located in Minneapolis.

Espros Presents 3D Face Scan with 130um Depth Resolution

Espros publishes a video of 3D face scan of its CEO Beat De Coi. The epc660 sensor scan features:

- Simultaneous acquisition of 3D TOF and grayscale images

- Distance resolution 0.13mm

- 5 images per second with a 1 GHz ARM8 processor

Sunday, January 28, 2018

LeddarTech LiDAR Magazine

LeddarTech publishes The Automotive LiDAR Magazine 2018 with a lot of interesting data about LiDARs:

According to IHS Markit analyst Akhilesh Kona, mechanical LiDAR is the only type that has negative CAGR expectations, starting 2020:

"According to IHS Markit, MEMS-based scanning LiDAR will be the primary solution for meeting the high volumes and LiDAR performance requirements needed for autonomous vehicle deployments as early as 2020.

Once pure SSLs are ready, MEMS technology will be challenged. The success of MEMS technology for automotive LiDAR applications depends on price, and its ability to achieve performances in line with the specifications of other solid-state technologies.

On the semiconductor front, emitters are critical for range and class 1 eye-safe operation. Achieving long ranges with laser emitters between 800 nm to 940 nm while respecting eye-safe limits can be a challenge. Emitters above 1400 nm and CO2 lasers are under development for long-range operations with less power at eye-safe levels; however, higher costs, technology maturity and export regulations remain barriers to market entry.

IHS Markit believes that OEMs will not compromise on performance for L4 and L5 vehicles, and therefore will mostly adopt emitters with wavelengths above 1400 nm for longer-range applications. Emitters in the 800 nm to 940 nm range will mainly be used for short- and mid-range LiDARs."

Saturday, January 27, 2018

TPSCo 2.8um Global Shutter Pixel

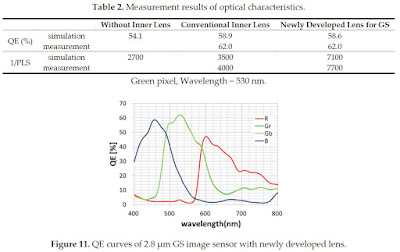

MDPI Special Issue on the 2017 International Image Sensor Workshop publishes TowerJazz-Panasonic paper "Development of Low Parasitic Light Sensitivity and Low Dark Current 2.8 μm Global Shutter Pixel" by Toshifumi Yokoyama, Masafumi Tsutsui, Masakatsu Suzuki, Yoshiaki Nishi, Ikuo Mizuno, and Assaf Lahav. This is probably the smallest size GS pixel offered as a silicon proven IP.

"We developed a low parasitic light sensitivity (PLS) and low dark current 2.8 μm global shutter pixel. We propose a new inner lens design concept to realize both low PLS and high quantum efficiency (QE). 1/PLS is 7700 and QE is 62% at a wavelength of 530 nm. We also propose a new storage-gate based memory node for low dark current. P-type implants and negative gate biasing are introduced to suppress dark current at the surface of the memory node. This memory node structure shows the world smallest dark current of 9.5 e−/s at 60 °C."

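For readers less familiar with the 1/PLS figure, the sketch below converts it into two other commonly used forms: a ratio in decibels and a global shutter efficiency, taken here as 1 - PLS. Both conversions are conventions assumed for illustration rather than definitions from the paper, and the function names are hypothetical. The last helper estimates the dark charge collected in the memory node over a storage time, using the quoted 9.5 e−/s at 60 °C.

```python
import math


def pls_to_db(inv_pls: float) -> float:
    """Express 1/PLS as a ratio in dB (20*log10 convention, assumed)."""
    return 20 * math.log10(inv_pls)


def shutter_efficiency(inv_pls: float) -> float:
    """Global shutter efficiency, assumed here to be 1 - PLS."""
    return 1 - 1 / inv_pls


def dark_electrons(dark_current_e_per_s: float, storage_time_s: float) -> float:
    """Dark charge accumulated in the memory node during storage."""
    return dark_current_e_per_s * storage_time_s


if __name__ == "__main__":
    print(f"1/PLS = 7700 -> {pls_to_db(7700):.1f} dB")
    print(f"shutter efficiency: {shutter_efficiency(7700):.4%}")
    # The 1/60 s storage time is an illustrative assumption.
    print(f"dark charge in 1/60 s: {dark_electrons(9.5, 1 / 60):.2f} e-")
```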
Occipital Raises $12M More

TechCrunch: San Francisco-based 3D camera company Occipital has closed $12m of the planned $15m Series C financing round. The round is being led by the Foundry Group. The company has raised about $33m to date.

CAOS HDR Camera Presentation

AutoSens publishes a video of the presentation "CAOS Smart Camera – Enabling extreme vision for automotive scenarios" by Nabeel A. Riza, University College Cork, Ireland.

Friday, January 26, 2018

ST Reports 2017 Earnings, Sets 2018 Priorities

SeekingAlpha: ST reports its 2017 earnings, including the imaging business:

"In Imaging, 2017 was a year of continued success as revenue grew triple digit year-over-year. Our proprietary time-of-flight technology gained traction and we released our third generation of laser ranging sensors. Also our specialized 3D sensing technology ramped in volume for a major customer and we also won designs for depth sensing time-of-flight solution to support assisted driving with a tier-1 automotive supplier, certainly a priority for us.

...We also successfully ramped up an important time-of-flight product and specialized imaging sensor, addressing a 3D sensing application for a major customer. For 2018, we are focused on the further development of our next generation of imaging technologies. We will launch a new Single Photon Avalanche Diode, SPAD, in 40-nanometer technology, enabling a step change in performance of time-of-flight application. We will also secure the following generation with a 3D SPAD, further boosting the performance, scalability and enabling higher resolution time-of-flight sensors.

This is key for multiple markets from 3D sensing to LIDAR. We are also developing our next generation of global shutter technologies with significant performance improvements in near infrared light detection, as well as our Q2 [ph] image sensor with backlight and 3D integration for visible and non-visible light application."

The outgoing ST CEO Carlo Bozotti says "I believe for the next 10 years, we see imaging becoming more and more important in the automotive and we want to be there and I think we can be there with our technologies... I believe that with our imaging technology, we can certainly contribute to the new ways of autonomous driving, the LIDARs, the three sensors."

The company's 2017 earnings presentation also sets targets for 2018:

Sony IEDM Presentation on 3-Layer Stacking Process Flow

Nikkei overviews Sony IEDM 2017 presentation on 3-layer stacked process flow for the fast image sensor presented at 2017 ISSCC:

The processing flow is:

- The pixel, DRAM and logic wafers are manufactured using 90nm, 30nm and 40nm processes, respectively.

- The DRAM wafer and the logic wafer are joined together, and the thickness of the DRAM wafer is reduced to 3μm

- The DRAM and logic wafers are electrically connected with TSVs

- The stacked wafers of the DRAM and logic are joined to the pixel wafer

- The 3-layer wafer stack is thinned down to 130μm and connected with TSVs

The number of TSVs connecting the pixel layer with the DRAM is ~15,000, and the number connecting the DRAM with the logic layer is ~20,000. Both sets of TSVs have a diameter of 2.5μm and a pitch of 6.3μm. The 1/2.3-inch sensor has a resolution of 21.3MP and a pixel size of 1.22μm.

Imec Presents SWIR Hyperspectral Sensor

Imec announces its very first SWIR hyperspectral camera. The SWIR camera integrates CMOS-based spectral filters together with InGaAs-based imagers, thus combining the compact and low-cost capabilities of CMOS technology with the spectral range of InGaAs.

Semiconductor CMOS-based hyperspectral imaging filters, as designed and manufactured by imec for the past five years, have so far been integrated monolithically onto silicon-based CMOS image sensors, which are sensitive in the 400 – 1000 nm visible and near-IR (VNIR) range. However, it is expected that more than half of commercial multi and hyperspectral imaging applications need discriminative spectral data in the 1000 – 1700 nm SWIR range.

“SWIR range is key for hyperspectral imaging as it provides extremely valuable quantitative information about water, fatness, lipid and protein content of organic and inorganic matters like food, plants, human tissues, pharmaceutical powders, as well as key discriminatory characteristics about plastics, paper, wood and many other material properties,” commented Andy Lambrechts, program manager for integrated imaging activities at imec. “It was a natural evolution for imec to extend its offering into the SWIR range while leveraging its core capabilities in optical filter design and manufacturing, as well as its growing expertise in designing compact, low-cost and robust hyperspectral imaging system solutions to ensure this complex technology delivers on its promises.”

Imec’s initial SWIR range hyperspectral imaging cameras feature both linescan ‘stepped filter’ designs with 32 to 100 or more spectral bands, as well as snapshot mosaic solutions enabling the capture of 4 to 16 bands in real-time at video-rate speeds.
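As a rough illustration of the snapshot mosaic idea, the sketch below splits a raw frame into per-band sub-images, assuming a repeating n × n filter tile (2 × 2 for 4 bands, 4 × 4 for 16). The tile layout, the band numbering and the function name are hypothetical; imec's actual filter arrangement is not described in the announcement.

```python
from typing import Dict, List

Frame = List[List[int]]


def split_mosaic(raw: Frame, n: int) -> Dict[int, Frame]:
    """Split a raw mosaic frame into n*n band images.

    Assumes band index = (row % n) * n + (col % n), i.e. a row-major
    n x n tile repeated across the sensor (an illustrative layout).
    """
    if len(raw) % n or len(raw[0]) % n:
        raise ValueError("frame dimensions must be multiples of the tile size")
    bands: Dict[int, Frame] = {}
    for r0 in range(n):
        for c0 in range(n):
            # Take every n-th pixel starting at the tile offset (r0, c0).
            bands[r0 * n + c0] = [row[c0::n] for row in raw[r0::n]]
    return bands


if __name__ == "__main__":
    # 4 x 4 test frame whose pixel value encodes its position.
    raw = [[10 * r + c for c in range(4)] for r in range(4)]
    for band, image in split_mosaic(raw, 2).items():
        print(band, image)
```

Each band image has 1/n of the raw resolution in each dimension, which is the spatial-resolution cost of capturing all bands in a single exposure.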

“The InGaAs imager industry is at a turning point,” explained Jerome Baron, business development manager of integrated imaging and vision systems at imec. “As the market recognizes the numerous applications of SWIR range hyperspectral imaging cameras beyond its traditional military, remote sensing and scientific niche fields, the time is right for organizations such as imec to enable compact, robust and low-cost hyperspectral imaging cameras in the SWIR range too. Imec’s objectives will be to advance this offering among the most price sensitive volume markets for this technology which include food sorting, waste management and recycling, industrial machine vision, precision agriculture and medical diagnostics.”

Hyperspectral imaging in the SWIR range with imec’s LS 100+ bands in the 1.1 – 1.7µm range enables classification of nuts versus their shells.

Hyperspectral imaging in the SWIR range with imec’s LS 100+ bands in the 1.1 – 1.7µm range enables classification of various textiles.

Facial Recognition Blocking

PRNewswire: While facial recognition is widely seen as a major step forward for technology, some VCs are investing in counter-face-recognition development. D-ID, a Tel Aviv, Israel-based startup developing a deep learning solution to protect identities from face recognition technologies, announces the completion of a $4m seed round.

D-ID, which stands for de-identification, claims to have developed a solution that produces images that are unrecognizable to facial recognition algorithms while remaining indistinguishable from the originals to the human eye, and that is designed to be difficult for AI to overcome.

"Our biometric data is being collected and used irresponsibly by governments and organizations. D-ID is here to change that," says Gil Perry, Co-Founder and CEO of D-ID.

"The growing sophistication of facial recognition technologies are turning our faces into passwords. But these passwords aren’t protected and cannot be changed once compromised. D-ID offers a system that protects images from unauthorized, automated face recognition.

Images are processed in a ground-breaking way that causes face recognition algorithms to fail to identify the subject in the image, while maintaining enough similarity to the original image for humans not to notice the difference."

Thursday, January 25, 2018

Sony on Image Sensor Requirements for Autonomous Driving

AutoSens 2017 publishes a presentation by Abhay Rai, Sony Director of Product Marketing for Automotive Sensing, on image sensor requirements for autonomous driving. The presentation also shows Sony image sensor division acquisitions and achievements:

Update: Sony publishes a web page dedicated to its automotive image sensor features.

Cybersecurity in Image Sensors

ON Semi publishes Giri Venkat's talk at AutoSens 2017 on Cybersecurity Considerations for Autonomous Vehicles Sensors:

Quanta Imager for High-Speed Vision Applications

MDPI Special Issue on the 2017 International Image Sensor Workshop publishes University of Edinburgh and ST paper "Single-Photon Tracking for High-Speed Vision" by Istvan Gyongy, Neale A.W. Dutton, and Robert K. Henderson.

"Quanta Imager Sensors provide photon detections at high frame rates, with negligible read-out noise, making them ideal for high-speed optical tracking. At the basic level of bit-planes or binary maps of photon detections, objects may present limited detail. However, through motion estimation and spatial reassignment of photon detections, the objects can be reconstructed with minimal motion artefacts. We here present the first demonstration of high-speed two-dimensional (2D) tracking and reconstruction of rigid, planar objects with a Quanta Image Sensor, including a demonstration of depth-resolved tracking."

"Quanta Imager Sensors provide photon detections at high frame rates, with negligible read-out noise, making them ideal for high-speed optical tracking. At the basic level of bit-planes or binary maps of photon detections, objects may present limited detail. However, through motion estimation and spatial reassignment of photon detections, the objects can be reconstructed with minimal motion artefacts. We here present the first demonstration of high-speed two-dimensional (2D) tracking and reconstruction of rigid, planar objects with a Quanta Image Sensor, including a demonstration of depth-resolved tracking."

Panasonic Organic UV Image Sensor

MDPI Special Issue on the 2017 International Image Sensor Workshop publishes Panasonic paper "A Real-Time Ultraviolet Radiation Imaging System Using an Organic Photoconductive Image Sensor" by Toru Okino, Seiji Yamahira, Shota Yamada, Yutaka Hirose, Akihiro Odagawa, Yoshihisa Kato, and Tsuyoshi Tanaka.

"We have developed a real time ultraviolet (UV) imaging system that can visualize both invisible UV light and a visible (VIS) background scene in an outdoor environment. As a UV/VIS image sensor, an organic photoconductive film (OPF) imager is employed. The OPF has an intrinsically higher sensitivity in the UV wavelength region than those of conventional consumer Complementary Metal Oxide Semiconductor (CMOS) image sensors (CIS) or Charge Coupled Devices (CCD). As particular examples, imaging of hydrogen flame and of corona discharge is demonstrated. UV images overlapped on background scenes are simply made by on-board background subtraction. The system is capable of imaging weaker UV signals by four orders of magnitude than that of VIS background. It is applicable not only to future hydrogen supply stations but also to other UV/VIS monitor systems requiring UV sensitivity under strong visible radiation environment such as power supply substations."

"We have developed a real time ultraviolet (UV) imaging system that can visualize both invisible UV light and a visible (VIS) background scene in an outdoor environment. As a UV/VIS image sensor, an organic photoconductive film (OPF) imager is employed. The OPF has an intrinsically higher sensitivity in the UV wavelength region than those of conventional consumer Complementary Metal Oxide Semiconductor (CMOS) image sensors (CIS) or Charge Coupled Devices (CCD). As particular examples, imaging of hydrogen flame and of corona discharge is demonstrated. UV images overlapped on background scenes are simply made by on-board background subtraction. The system is capable of imaging weaker UV signals by four orders of magnitude than that of VIS background. It is applicable not only to future hydrogen supply stations but also to other UV/VIS monitor systems requiring UV sensitivity under strong visible radiation environment such as power supply substations."

Tuesday, January 23, 2018

ST 3D Presentations

ST publishes a couple of videos devoted to 3D image creation:

Structure-from-Motion

Fusing data from camera, depth sensor and inertial sensors

FlightSense ToF Technology

ARM Automotive-Grade ISP

AutoSens publishes a presentation on automotive ISP by Alexis Lluis Gomez, ARM Senior Manager of Image Quality:

Infineon and PMD Demo the World’s Smallest 3D ToF Module

Embedded Vision Alliance publishes an Infineon and PMD demo of the world’s smallest 3D ToF module from CES:

Sunday, January 21, 2018

Samsung Presents 3-Layer Stacked Image Sensor

Samsung's mobile image sensor page unveils the company's 3-layer stacked image sensor, which captures 1080p video at 480fps:

Thanks to DJ for the link!

Samsung Applies for Under-Display Fingerprint Image Sensor Patent

Samsung patent application US20180012069 "Fingerprint sensor, fingerprint sensor package, and fingerprint sensing system using light sources of display panel" by Dae-young Chung, Hee-chang Hwang, Kun-yong Yoon, Woon-bae Kim, Bum-suk Kim, Min Jang, Min-chul Lee, and Jung-woo Kim proposes an optical fingerprint image sensor under an OLED display panel:

Saturday, January 20, 2018

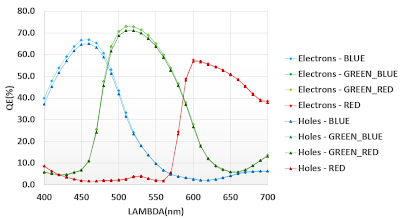

ST 115dB Linear HDR Pixel

MDPI Special Issue on IISW 2017 publishes ST paper "A 750 K Photocharge Linear Full Well in a 3.2 μm HDR Pixel with Complementary Carrier Collection" by Frédéric Lalanne, Pierre Malinge, Didier Hérault, Clémence Jamin-Mornet, and Nicolas Virollet.

"The native HDR pixel concept based on a parallel electron and hole collection for, respectively, a low signal level and a high signal level is particularly well-suited for this performance challenge. The theoretical performance of this pixel is modeled and compared to alternative HDR pixel architectures. This concept is proven with the fabrication of a 3.2 μm pixel in a back-side illuminated (BSI) process including capacitive deep trench isolation (CDTI). The electron-based image uses a standard 4T architecture with a pinned diode and provides state-of-the-art low-light performance, which is not altered by the pixel modifications introduced for the hole collection. The hole-based image reaches 750 kh+ linear storage capability thanks to a 73 fF CDTI capacitor. Both images are taken from the same integration window, so the HDR reconstruction is not only immune to the flicker issue but also to motion artifacts."

"The native HDR pixel concept based on a parallel electron and hole collection for, respectively, a low signal level and a high signal level is particularly well-suited for this performance challenge. The theoretical performance of this pixel is modeled and compared to alternative HDR pixel architectures. This concept is proven with the fabrication of a 3.2 μm pixel in a back-side illuminated (BSI) process including capacitive deep trench isolation (CDTI). The electron-based image uses a standard 4T architecture with a pinned diode and provides state-of-the-art low-light performance, which is not altered by the pixel modifications introduced for the hole collection. The hole-based image reaches 750 kh+ linear storage capability thanks to a 73 fF CDTI capacitor. Both images are taken from the same integration window, so the HDR reconstruction is not only immune to the flicker issue but also to motion artifacts."

Friday, January 19, 2018

Espros LiDAR Sensor Presentation at AutoSens 2017

AutoSens publishes a video of Espros CEO Beat De Coi presenting a pulsed ToF sensor in October 2017:

Intel Starts Shipments of D400 RealSense Cameras

Intel begins shipping two RealSense D400 Depth Cameras from its next-generation D400 product family: the D415 and D435, based on previously announced D400 3D modules.

Intel is also offering its D4 and D4M (mobile version) depth processor chips for stereo cameras:

RealSense D415

Thursday, January 18, 2018

ams Bets on 3D Sensing

SeekingAlpha publishes an analysis of the recent ams business moves:

"ams has assembled strong capabilities in 3D sensing - one of the strongest emerging new opportunities in semiconductors. 3D sensing can detect image patterns, distance, and shape, allowing for a wide range of uses, including facial recognition, augmented reality, machine vision, robotics, and LIDAR.

Although ams is not currently present in the software side, the company has recently begun investing in software development as a way to spur future adoption. Ams has also recently begun a collaboration with Sunny Optical, a leading Asian sensor manufacturer, to take advantage of Sunny's capabilities in module manufacturing.

At this point it remains to be seen how widely adopted 3D sensing will be; 3D sensing could become commonplace on all non-entry level iPhones in a short time and likewise could gain broader adoption in Android devices. What's more, there is the possibility of adding 3D sensing to other consumer devices like tablets, not to mention adding 3D sensing to the back of phones in future models."

"ams has assembled strong capabilities in 3D sensing - one of the strongest emerging new opportunities in semiconductors. 3D sensing can detect image patterns, distance, and shape, allowing for a wide range of uses, including facial recognition, augmented reality, machine vision, robotics, and LIDAR.

Although ams is not currently present in the software side, the company has recently begun investing in software development as a way to spur future adoption. Ams has also recently begun a collaboration with Sunny Optical, a leading Asian sensor manufacturer, to take advantage of Sunny's capabilities in module manufacturing.

At this point it remains to be seen how widely adopted 3D sensing will be; 3D sensing could become commonplace on all non-entry level iPhones in a short time and likewise could gain broader adoption in Android devices. What's more, there is the possibility of adding 3D sensing to other consumer devices like tablets, not to mention adding 3D sensing to the back of phones in future models."

Wednesday, January 17, 2018

RGB to Hyperspectral Image Conversion

Ben Gurion University, Israel, researchers attempt a seemingly impossible thing: converting regular RGB consumer camera images into hyperspectral ones, purely by software. Their paper "Sparse Recovery of Hyperspectral Signal from Natural RGB Images" by Boaz Arad and Ohad Ben-Shahar, presented at the European Conference on Computer Vision (ECCV) in Amsterdam, The Netherlands, in October 2016, says:

"We present a low cost and fast method to recover high quality hyperspectral images directly from RGB. Our approach first leverages hyperspectral prior in order to create a sparse dictionary of hyperspectral signatures and their corresponding RGB projections. Describing novel RGB images via the latter then facilitates reconstruction of the hyperspectral image via the former. A novel, larger-than-ever database of hyperspectral images serves as a hyperspectral prior. This database further allows for evaluation of our methodology at an unprecedented scale, and is provided for the benefit of the research community. Our approach is fast, accurate, and provides high resolution hyperspectral cubes despite using RGB-only input."

"The goal of our research is the reconstruction of the hyperspectral data from natural images from their (single) RGB image. Prima facie, this appears a futile task. Spectral signatures, even in compact subsets of the spectrum, are very high (and in the theoretical continuum, infinite) dimensional objects while RGB signals are three dimensional. The back-projection from RGB to hyperspectral is thus severely underconstrained and reversal of the many-to-one mapping performed by the eye or the RGB camera is rather unlikely. This problem is perhaps expressed best by what is known as metamerism – the phenomenon of lights that elicit the same response from the sensory system but having different power distributions over the sensed spectral segment.

Given this, can one hope to obtain good approximations of hyperspectral signals from RGB data only? We argue that under certain conditions this otherwise ill-posed transformation is indeed possible; First, it is needed that the set of hyperspectral signals that the sensory system can ever encounter is confined to a relatively low dimensional manifold within the high or even infinite-dimensional space of all hyperspectral signals. Second, it is required that the frequency of metamers within this low dimensional manifold is relatively low. If both conditions hold, the response of the RGB sensor may in fact reveal much more on the spectral signature than first appears and the mapping from the latter to the former may be achievable.

Interestingly enough, the relative frequency of metameric pairs in natural scenes has been found to be as low as 10^−6 to 10^−4. This very low rate suggests that at least in this domain spectra that are different enough produce distinct sensor responses with high probability.

The eventual goal of our research is the ability to turn consumer grade RGB cameras into a hyperspectral acquisition devices, thus permitting truly low cost and fast HISs."
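
Metamerism and the "relative frequency of metameric pairs" can be made concrete with a small sketch. It assumes a made-up 6-band camera response and four hand-picked spectra, and it counts a pair as metameric when the RGB responses match within a tolerance while the spectra clearly differ. The thresholds and names are illustrative assumptions, not the criteria used in the cited measurements.

```python
from itertools import combinations

# Hypothetical camera response: each channel averages two of six bands.
RESPONSE = [
    [0, 0, 0, 0, 0.5, 0.5],  # R
    [0, 0, 0.5, 0.5, 0, 0],  # G
    [0.5, 0.5, 0, 0, 0, 0],  # B
]


def to_rgb(spectrum):
    return [sum(r * s for r, s in zip(row, spectrum)) for row in RESPONSE]


def distance(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v)) ** 0.5


def metameric_pair_fraction(spectra, rgb_tol=1e-6, spectrum_tol=0.1):
    """Fraction of spectrum pairs that look the same to the sensor
    (RGB distance <= rgb_tol) despite being different spectra."""
    pairs = list(combinations(spectra, 2))
    metamers = sum(
        1
        for a, b in pairs
        if distance(to_rgb(a), to_rgb(b)) <= rgb_tol and distance(a, b) > spectrum_tol
    )
    return metamers / len(pairs)


if __name__ == "__main__":
    spectra = [
        [2, 0, 0, 0, 0, 1],
        [0, 2, 0, 0, 1, 0],  # different spectrum, same RGB as the first
        [0, 0, 1, 1, 0, 0],
        [1, 1, 1, 1, 1, 1],
    ]
    print(to_rgb(spectra[0]), to_rgb(spectra[1]))
    print("metameric pair fraction:", metameric_pair_fraction(spectra))
```

In this toy set one pair out of six is metameric. The quoted figures for natural scenes are several orders of magnitude lower, which is what makes the RGB-to-hyperspectral mapping plausible.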

"We present a low cost and fast method to recover high quality hyperspectral images directly from RGB. Our approach first leverages hyperspectral prior in order to create a sparse dictionary of hyperspectral signatures and their corresponding RGB projections. Describing novel RGB images via the latter then facilitates reconstruction of the hyperspectral image via the former. A novel, larger-than-ever database of hyperspectral images serves as a hyperspectral prior. This database further allows for evaluation of our methodology at an unprecedented scale, and is provided for the benefit of the research community. Our approach is fast, accurate, and provides high resolution hyperspectral cubes despite using RGB-only input."

"The goal of our research is the reconstruction of the hyperspectral data from natural images from their (single) RGB image. Prima facie, this appears a futile task. Spectral signatures, even in compact subsets of the spectrum, are very high (and in the theoretical continuum, infinite) dimensional objects while RGB signals are three dimensional. The back-projection from RGB to hyperspectral is thus severely underconstrained and reversal of the many-to-one mapping performed by the eye or the RGB camera is rather unlikely. This problem is perhaps expressed best by what is known as metamerism – the phenomenon of lights that elicit the same response from the sensory system but having different power distributions over the sensed spectral segment.

Given this, can one hope to obtain good approximations of hyperspectral signals from RGB data only? We argue that under certain conditions this otherwise ill-posed transformation is indeed possible; First, it is needed that the set of hyperspectral signals that the sensory system can ever encounter is confined to a relatively low dimensional manifold within the high or even infinite-dimensional space of all hyperspectral signals. Second, it is required that the frequency of metamers within this low dimensional manifold is relatively low. If both conditions hold, the response of the RGB sensor may in fact reveal much more on the spectral signature than first appears and the mapping from the latter to the former may be achievable.

Interestingly enough, the relative frequency of metameric pairs in natural scenes has been found to be as low as 10^−6 to 10^−4. This very low rate suggests that at least in this domain spectra that are different enough produce distinct sensor responses with high probability.

The eventual goal of our research is the ability to turn consumer grade RGB cameras into a hyperspectral acquisition devices, thus permitting truly low cost and fast HISs."
