Monday, March 23, 2026

STMicro Nvidia collab

STMicroelectronics to integrate ST sensors, microcontrollers, and motor control solutions with NVIDIA robotics ecosystem to help developers design, train, and deploy humanoid robots and other physical AI systems with higher efficiency, reliability, and scalability

First steps with integration of Leopard Imaging stereo depth camera enabled by ST with the NVIDIA Holoscan Sensor Bridge, and the addition of the high-fidelity sim-to-real model of ST IMU in NVIDIA Isaac Sim ecosystem

STMicroelectronics (NYSE: STM), a global semiconductor leader serving customers across the spectrum of electronics applications, today announced the acceleration of global development and adoption of physical AI systems, including humanoid, industrial, service and healthcare robots. ST is integrating its comprehensive portfolio for advanced robotics, into the reference set of components compatible with the NVIDIA Holoscan Sensor Bridge (HSB). In parallel, high-fidelity NVIDIA Isaac Sim models of ST components are being integrated into both companies’ robotics ecosystems to support faster, more accurate sim-to-real research and development. The first deliverables available to developers today include the integration of Leopard’s depth camera enabled by ST with the NVIDIA HSB and the high-fidelity model of an ST IMU into NVIDIA’s Isaac Sim ecosystem.

“ST is well engaged within the robotics community, providing robust support and a well-established ecosystem,” said Rino Peruzzi, Executive Vice President, Sales & Marketing, Americas & Global Key Account Organization at STMicroelectronics. “Our collaboration with NVIDIA aims to unleash the next wave of cutting-edge robotics innovation with developer and customer experience streamlined at every step, from the inception of AI algorithms to the seamless integration of sensors and actuators. This will accelerate the evolution of sophisticated AI-driven physical platforms.”

“Accelerating the development of next-generation autonomous systems requires high-fidelity simulation and seamless hardware integration to bridge the gap between virtual training and real-world deployment,” said Deepu Talla, Vice President of Robotics and Edge AI at NVIDIA. “The integration of STMicroelectronics’ sensor and actuator technologies with NVIDIA Isaac Sim, Holoscan Sensor Bridge and Jetson platforms provides developers with a unified foundation to build, simulate and deploy physical AI at scale.”

Simplifying sensor and actuator integration with the Holoscan Sensor Bridge

With the NVIDIA HSB, developers can unify, standardize, synchronize, and streamline data acquisition and logging from multiple ST sensors and actuators, a critical foundation for building high fidelity NVIDIA Isaac models, accelerating learning, and minimizing the sim to real gap.

The goal is to simplify the process of connecting ST sensors and actuators to NVIDIA Jetson platforms through pre-integrated solutions for the combination of STM32 MCUs, advanced sensors (including IMUs, imagers, and ToF devices) and motor‑control solutions, particularly for humanoid robot designs. Leopard Imaging’s stereo depth camera for robots is the perfect example. Using ST imaging, depth and motion-sensing technologies, it is expected to support a broad wave of designs across Physical AI OEMs, academic research groups and the industrial robotics community.

Reducing cost, complexity challenges with high-fidelity modeling for Omniverse Isaac

Advanced robotics developers face high development costs, in addition to modeling challenges. High‑fidelity simulations with extensive randomization demand substantial GPU and CPU resources and large datasets. Selecting which parameters to randomize, and over what ranges, requires deep domain expertise. Poor choices can result in unrealistic scenarios or inefficient training. Finally, excessive variability can confuse models, slow convergence, and degrade real‑world performance when randomization no longer reflects plausible conditions.

ST and NVIDIA’s objective is to provide accurate, hardware-calibrated models for the comprehensive portfolio of ST components matching the requirements of advanced robotics. Following the availability of the first model of an IMU, ST is working to bring developers models of ToF sensors, actuators and other ICs derived from benchmark data collected on real ST hardware, using ST tools to capture accurate parameters and realistic behavior, resulting in models optimized to NVIDIA’s Isaac Sim ecosystem. NVIDIA HSB is being integrated into ST’s toolchain collaboratively.

As a result, ST and NVIDIA envision that more accurate models will significantly improve robot learning. With models that closely mirror real-world device behavior, robots can learn from simulations that better reflect actual conditions, shortening training cycles and lowering the cost of building and refining humanoid robotics applications.

Thursday, March 19, 2026

Is Huawei developing its own image sensor?

Link: https://www.huaweicentral.com/huaweis-first-1-inch-camera-sensor-is-ready-with-new-imaging-tech/

Huawei’s first 1-inch camera sensor is ready with new imaging tech

...

This new sensor is a 50MP camera with a 1/1.3-inch format. It uses an RYYB (Red, Yellow, Yellow, Blue) filter array that allows 40% more light to reach the sensor. RYYB sensors enhance low-light performance with noise reduction in dark scenarios and improve the overall dynamic range to offer brighter and more detailed images even under challenging conditions.

On the flip side, the 1-inch camera sensor is paired with DCG and HDR technology. DCG refers to Dual Conversion Gain technology that boosts the image quality by capturing a single exposure with two different gains – high and low simultaneously.

...

Tuesday, March 17, 2026

Pointcloud's 4D FMCW lidar paper published in Nature

Settembrini et al. from Pointcloud GmbH (Zürich, Switzerland) published a paper titled "A large-scale coherent 4D imaging sensor" in Nature.

Link: https://www.nature.com/articles/s41586-026-10183-6

Abstract: Detailed and accurate 3D mapping of dynamic environments is essential for machines to interface with their surroundings and for human–machine interaction. Although considerable effort has been made to create the equivalent of the complementary metal–oxide–semiconductor (CMOS) image sensor for the 3D world, scalable, high-performance, reliable solutions have proven elusive. Focal plane array (FPA) sensors using frequency-modulated continuous-wave (FMCW) light detection and ranging (LiDAR) have shown potential to meet all of these requirements and also provide direct measurement of radial velocity as a fourth dimension. Previous demonstrations, although promising, have not achieved the simultaneous scale and performance required by commercial applications. Here we present a large-scale, coherent LiDAR FPA enabled by comprehensive chip-scale optoelectronic integration. A 4D imaging camera is built around the FPA and used to acquire point clouds. At the core is a 352 × 176-pixel 2D FMCW LiDAR FPA comprising more than 0.6 million photonic components, all integrated on-chip together with their associated electronics. This represents a five times increase in pixel count with respect to previous demonstrations. The pixel architecture combines the outbound and inbound optical paths within the pixel in a monostatic configuration, together with coherent detectors and electronics. Frequency-modulated light is directed sequentially to groups of pixels by in-plane thermo-optic switches with integrated electronics for driving and calibration. An integrated serial digital interface controls both optical switching and readout synchronously. Point clouds of objects ranging from 4 to 65 m with per-pixel integration time compatible with frame rates from 3 to 15 frames per second (fps) are shown. This result demonstrates the capabilities of FMCW LiDAR FPA sensors as enablers of ubiquitous, low-cost, compact coherent 4D imaging cameras.
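The "fourth dimension" in the abstract comes from the way an FMCW measurement separates range from Doppler shift. The following is a minimal textbook sketch, assuming a triangular (up/down) chirp and the sign convention that a positive velocity means an approaching target. The chirp bandwidth, chirp duration, wavelength, and function names are illustrative assumptions and are not taken from the paper.

```python
C = 299_792_458.0  # speed of light in m/s


def beat_frequencies(range_m, velocity_mps, bandwidth_hz, chirp_s, wavelength_m):
    """Forward model: beat frequencies on the up- and down-chirp.

    Assumes a linear triangular chirp; an approaching target (positive
    velocity) lowers the up-chirp beat and raises the down-chirp beat.
    """
    f_range = 2.0 * range_m * bandwidth_hz / (C * chirp_s)
    f_doppler = 2.0 * velocity_mps / wavelength_m
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up_hz, f_down_hz, bandwidth_hz, chirp_s, wavelength_m):
    """Invert the forward model: recover range and radial velocity."""
    f_range = 0.5 * (f_up_hz + f_down_hz)
    f_doppler = 0.5 * (f_down_hz - f_up_hz)
    range_m = C * chirp_s * f_range / (2.0 * bandwidth_hz)
    velocity_mps = wavelength_m * f_doppler / 2.0
    return range_m, velocity_mps


if __name__ == "__main__":
    # Hypothetical chirp: 1 GHz sweep in 10 us at 1550 nm.
    params = dict(bandwidth_hz=1e9, chirp_s=10e-6, wavelength_m=1.55e-6)
    f_up, f_down = beat_frequencies(30.0, 2.0, **params)
    print(range_and_velocity(f_up, f_down, **params))
```

The sum of the two beat frequencies carries the range and their difference carries the radial velocity, which is why a single coherent pixel can report both.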

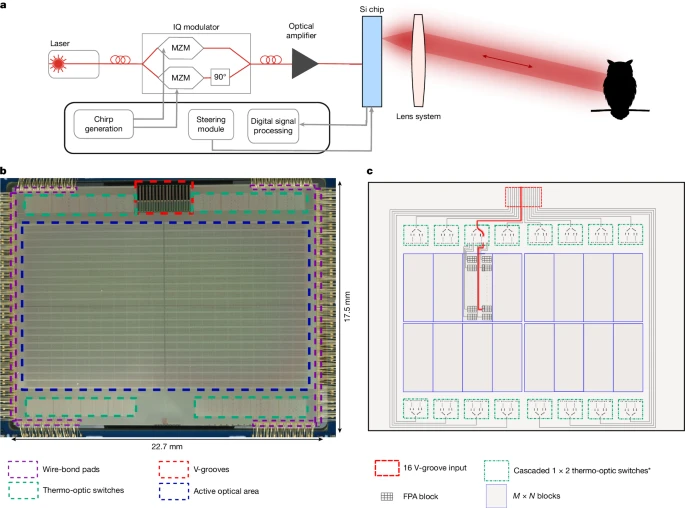

a, The architecture contains an imaging chip that simultaneously functions as both transmitter and receiver. The light path from the chip to target (owl) is determined by the optical lens system. b, Microscope image of the chip, showing the active optical area and thermo-optical switching network. c, Schematic of the light path selection process on the imaging chip. *Some of the outputs of the first-level switches are not connected.

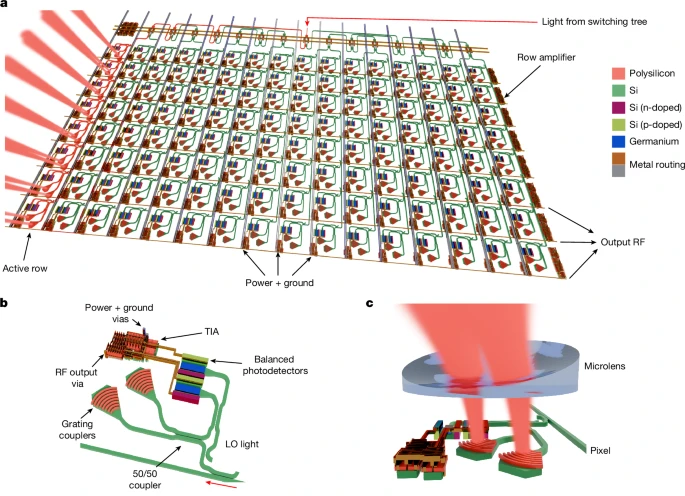

a, Schematic representation of the coherent FPA block. The modulated light is routed to a single 8-pixel row, illuminating a subsection of the scene. The in-plane rotation and emission angle of each grating coupler pair are adjusted to enhance detection efficiency. b, Schematic image of a single coherent pixel, including grating coupler pairs, balanced germanium photodetectors and an integrated TIA. c, Schematic image of a single element of the concave microlens array deposited on-chip to increase light-coupling efficiency.

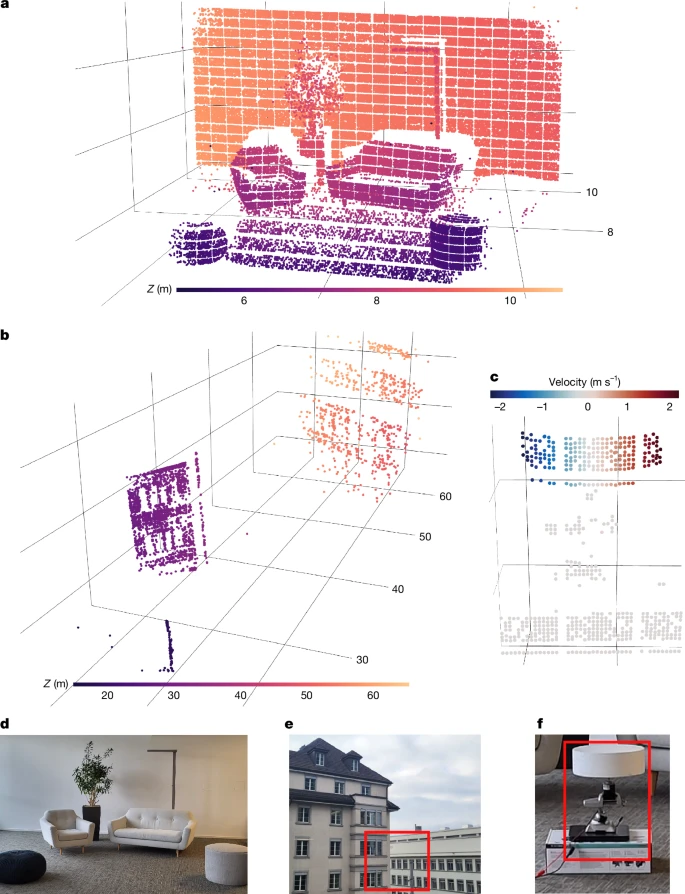

a, Point cloud of an office scene (6–11 m) obtained by a single acquisition using the entire imaging array with a f = 35 mm focal length lens. b, Point cloud from two buildings located 20–65 m away, obtained by coherently averaging four acquisitions with a f = 50 mm focal length lens. c, Velocity-annotated point cloud of the disc that is rotating about its vertical axis, obtained using a single acquisition with a f = 35 mm focal length lens. d–f, Photographs of the scenes in a–c. Red rectangles denote the regions of interest.

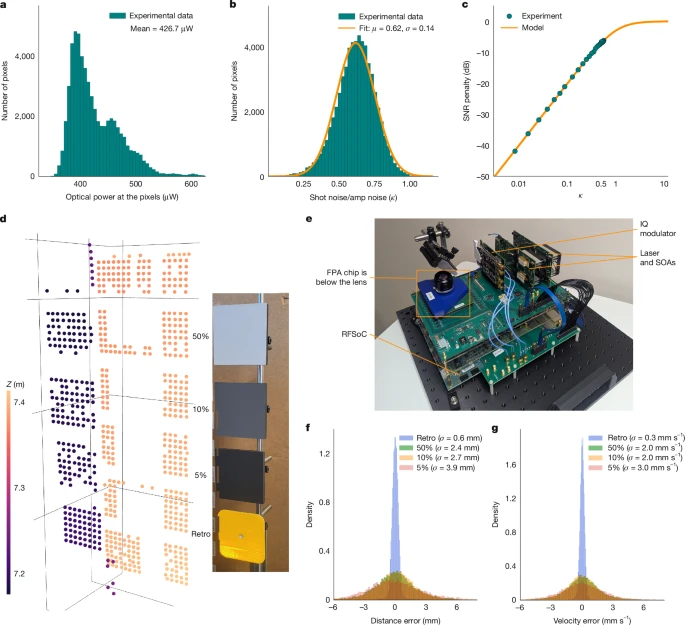

a, Distribution of optical power levels arriving at each pixel, measured by integrated monitor photodiodes. b, Distribution of the measured shot-noise to amplifier-noise magnitude ratio κ over the array. The mean value of κ is 0.62. c, SNR loss as a function of the shot-noise to amplifier-noise ratio. Operating at κ = 0.62 results in a SNR loss of −5.6 dB below a shot-noise-limited system. d, Point cloud obtained with coherent averaging of three frames from the stationary calibrated targets at 7.2 m and photograph of the three calibrated targets with known Lambertian reflectivities and a retroreflector (‘Retro’). e, Photograph of the entire system. SOA, semiconductor optical amplifier. f,g, Distribution of distance (f) and velocity (g) measurement errors.
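The −5.6 dB figure in panel c can be reproduced with a simple noise-power argument. This is a sketch under the assumption that shot noise and amplifier noise are independent and add in power, with κ defined as the ratio of their magnitudes; the paper's own derivation may be more detailed.

```python
import math


def snr_loss_db(kappa):
    """SNR relative to a shot-noise-limited receiver, in dB.

    kappa is the shot-noise to amplifier-noise magnitude ratio.
    Assumes independent noise sources, so their variances add:
    loss = shot_var / (shot_var + amp_var) = kappa^2 / (1 + kappa^2).
    """
    return 10.0 * math.log10(kappa**2 / (1.0 + kappa**2))


if __name__ == "__main__":
    print(round(snr_loss_db(0.62), 1))  # mean kappa reported in panel b
```

Under this model the loss approaches 0 dB as κ grows, so raising the local-oscillator power (more shot noise relative to amplifier noise) moves the pixel toward shot-noise-limited operation.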

Monday, March 09, 2026

Paper on FSI vs BSI for SPADs

In a paper titled "" Eom et al. from Yonsei University, EPFL, Sungkyunkwan University and TruPixel, Inc. write:

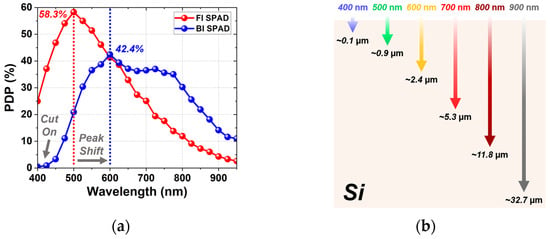

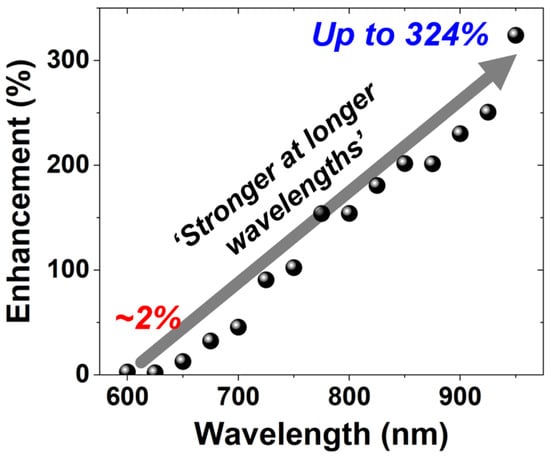

This paper presents a process-controlled study of illumination engineering in single-photon avalanche diodes (SPADs) fabricated in a 110 nm standard CMOS image sensor (CIS) technology. Front-illuminated (FI) and back-illuminated (BI) SPADs were implemented with identical front-end-of-line (FEOL) structures, including the junction and guard-ring configurations, enabling the isolation of the effects of illumination direction and back-end-of-line (BEOL) configuration without modifying the junction structure. Through TCAD simulations and comprehensive experimental characterizations, including current–voltage, light-emission, dark count rate (DCR), photon detection probability (PDP), and timing-jitter measurements, we systematically analyze the performance trade-offs introduced by the BI configuration. The BI SPAD exhibits enhanced near-infrared PDP and a broader spectral response due to its deeper absorption region and the incorporation of a metal reflector, while maintaining identical avalanche characteristics, as evidenced by an unchanged 72 ps full-width-at-half-maximum (FWHM) timing jitter. However, the backside illumination increases the diffusion tail, indicating a trade-off between near-infrared sensitivity and diffusion-related timing performance. These results provide design guidelines for optimizing SPAD performance through illumination-direction and BEOL engineering while preserving the FEOL design and demonstrate a useful approach for SPAD integration in standard CMOS technology.

Link: https://www.mdpi.com/1424-8220/26/5/1664

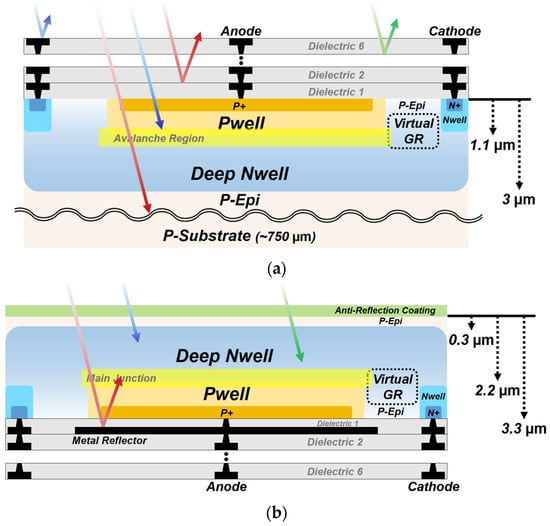

Figure 1. Cross-sections of the SPADs with different illumination configurations: (a) FI SPAD; (b) BI SPAD.

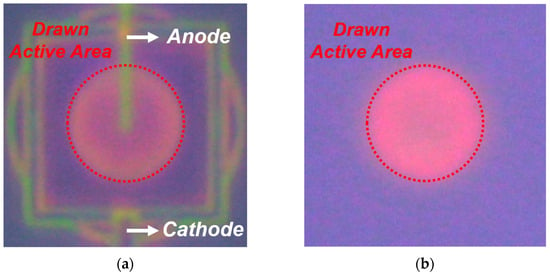

Figure 5. LET results of the SPADs at Vex = 3 V, visualizing avalanche emission across the active area: (a) FI SPAD; (b) BI SPAD.

Figure 9. PDP spectra of the FI and BI SPADs at Vex = 3 V, together with the optical penetration depths of different wavelengths in silicon: (a) PDP spectra from 400 to 950 nm. (b) Optical penetration depths in silicon.

Figure 10. Wavelength-dependent PDP enhancement of the BI SPAD over the FI SPAD.

Thursday, March 05, 2026

Teledyne e2v announces camera with angle-sensitive pixels

From GlobeNewswire: https://www.globenewswire.com/news-release/2026/03/05/3249875/0/en/Teledyne-e2v-Introduces-Perciva-5D-Camera-Occlusion-free-3D-Vision-for-Industrial-Retail-and-Robotic-Imaging.html

Teledyne e2v Introduces Perciva™ 5D Camera: Occlusion-free 3D Vision for Industrial, Retail, and Robotic Imaging

GRENOBLE, France, March 05, 2026 (GLOBE NEWSWIRE) -- Teledyne e2v, a Teledyne Technologies [NYSE: TDY] company and global innovator of imaging solutions, announces the launch of the Perciva™ 5D camera, a breakthrough imaging innovation designed to make high-quality short-range 3D vision cost-effective, reliable, and easy to integrate.

Most industrial cameras only capture 2D images, yet many applications increasingly require depth perception at close and very-close distances. Perciva 5D delivers this capability through a unique Angular Sensitive Pixel technology and advanced on-board processing, enabling real-time 2D and 3D image fusion at the calibrated working distance range. Perciva 5D also features a powerful Neural Processing Unit (NPU), enabling Artificial Intelligence models to run on-device and be customized to each customer’s specific requirements.

Perciva 5D generates 2D and 3D data from a single CMOS sensor, free from optical occlusion, producing time-aligned 2D frames alongside pixel-aligned 3D depth maps. With comprehensive 3D processing built directly into the camera, users benefit from immediate depth maps or point-cloud outputs. Perciva 5D operates using ambient light, indoors or outdoors, eliminating the need for an external NIR source while maintaining reliable performance and minimizing overall system costs. Designed for challenging environments, it offers plug-and-play integration through its GenICam-compliant, GigE Vision interface and robust IP6x-rated housing with industrial M12 connectors.

Factory calibrated and weighing just 230 grams, Perciva 5D operates at less than 5 W, and is ideal for robotics (arms, cobots and humanoids), retail self-checkout solutions, and 3D industrial process monitoring. It supports user-adjustable frame rates or triggered acquisition and multiple power options. Using GenDC / GenTL the camera integrates seamlessly with Teledyne’s Spinnaker® 4 API and SpinView® for 2D / 3D visualisation, as well as leading machine-vision software platforms.

Perciva 5D will be showcased during Embedded World, Nuremberg, Germany, from 10-12 March 2026. Visit Teledyne at stand 2-541 in Hall 2 or contact us online for more information.

Documentation, samples, and software for evaluation or development are available upon request.

Tuesday, March 03, 2026

New Leadership at Bharat Semi

Dr Boyd Fowler has joined as the Chief Technology Officer at Bharat Semi, an Indian sensor/semiconductor startup.

Here's some text from their recent post:

One of the world's foremost leaders in sensors, Boyd brings deep expertise in CMOS and infrared image sensors, semiconductor architecture, and large-scale engineering strategy. He played a foundational role in developing the technology that enabled commercial image sensors. Additionally, he helped develop the first pinned photodiodes at the world's largest fab. Before joining Bharat Semi, Boyd served as CTO at OMNIVISION Technologies and held senior technology leadership roles at Google, Fairchild Imaging, and BAE Systems. He is known for defining long-term technology vision and leading complex engineering organizations where discipline, scale, and execution are critical.

Link: https://www.bsemis.com/

PS: Bharat Semi should not be confused with Bharath Semiconductors, Bharat Semitech, or Bharat Semiconductor Society.

Friday, February 27, 2026

LOFIC CIS paper

Kitajima et al. from Ritsumeikan University published a paper titled "An LOFIC Image Sensor Readout Circuit with an On-Chip HDR Merger Achieving 36.5% Area and 14.9% Power Reduction" as an extended version of work presented at the 2025 IEEE Sensors conference.

Abstract: For sensing applications, a complementary metal oxide semiconductor (CMOS) image sensor (CIS) with a lateral overflow integration capacitor (LOFIC) is in high demand. The LOFIC CIS can achieve high-dynamic-range (HDR) imaging by combining a low-conversion-gain (LCG) signal for large maximum signal electrons and a high-conversion-gain (HCG) signal for a low electron-referred noise floor. However, the LOFIC CIS faces challenges regarding the power consumption and circuit area when reading both HCG and LCG signals. To address these issues, this study proposes a readout circuit composed of area-efficient MOS capacitors using a folding DC operating point technique and an in-column signal selector for an on-chip HDR merger of HCG and LCG signals. A 10-bit test chip was fabricated with a 0.18µm CMOS process with MOS capacitors. The fabricated chip maintains high linearity, achieving an integral nonlinearity (INL) of +7.17/−6.93 LSB for the HCG signal and +7.95/−7.41 LSB for the LCG signal. Furthermore, the proposed design achieves a 14.92% reduction in the average power consumption of the total readout circuit and a 36.5% reduction in the readout circuit area.
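The idea of an in-column selector can be illustrated in software. The sketch below assumes the selector keeps the HCG code while it is below a saturation threshold and otherwise switches to the LCG code, with a one-bit flag recording the choice. The threshold, the conversion-gain ratio, and all function names are hypothetical; the post does not describe the paper's actual selection rule.

```python
def select_column_signal(hcg_code, lcg_code, hcg_threshold):
    """Pick one code per pixel, returning (code, used_lcg).

    Assumption: HCG is preferred for its lower noise floor until it
    nears saturation, after which the LCG code is used instead.
    """
    if hcg_code < hcg_threshold:
        return hcg_code, False
    return lcg_code, True


def linearize(code, used_lcg, gain_ratio):
    """Map the selected code onto a single linear HDR scale."""
    return code * gain_ratio if used_lcg else float(code)


def merge_row(hcg_row, lcg_row, hcg_threshold=900, gain_ratio=16.0):
    """Merge one row of 10-bit HCG/LCG codes into linear HDR values."""
    merged = []
    for hcg_code, lcg_code in zip(hcg_row, lcg_row):
        code, used_lcg = select_column_signal(hcg_code, lcg_code, hcg_threshold)
        merged.append(linearize(code, used_lcg, gain_ratio))
    return merged


if __name__ == "__main__":
    # One dark pixel (HCG kept) and one bright pixel (LCG selected).
    print(merge_row([100, 1023], [6, 500]))
```

Selecting before readout means only one code plus a flag has to leave the column for each pixel, rather than both codes, which is one plausible route to the power and area savings the abstract reports.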

Link: https://www.mdpi.com/2674-0729/5/1/8

The conference proceedings version is available here: https://ieeexplore.ieee.org/document/11331086

Wednesday, February 25, 2026

Conference List - August 2026

The 3rd International Conference on AI Sensors and Transducers - 2–7 August 2026 - Jeju, South Korea - Website

BNL Physics and Detector Simulation Meeting - 11 August 2026 - Zoom online - Website

International Conference on Particle Accelerators and Detector Technologies (ICPADT-26) - 21-22 August 2026 - Washington, DC, USA - Website

SPIE Optics & Photonics - 23-27 August 2026 - San Diego, California, USA - Website

IOP Photon 2026 - 31 August-3 September 2026 - Newcastle-upon-Tyne, UK - Website

If you know about additional local conferences, please add them as comments.


QVGA SPAD dToF sensor paper

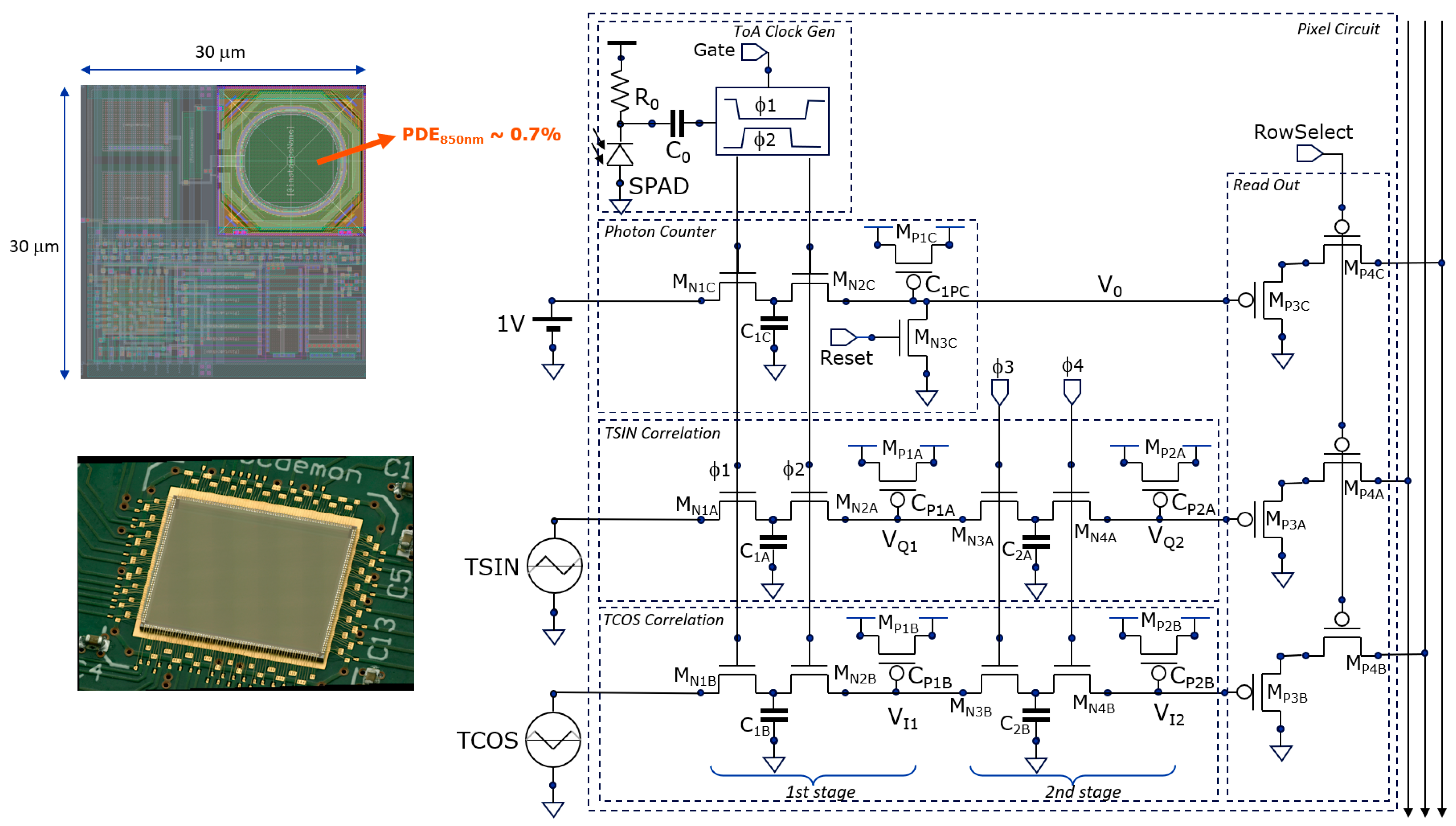

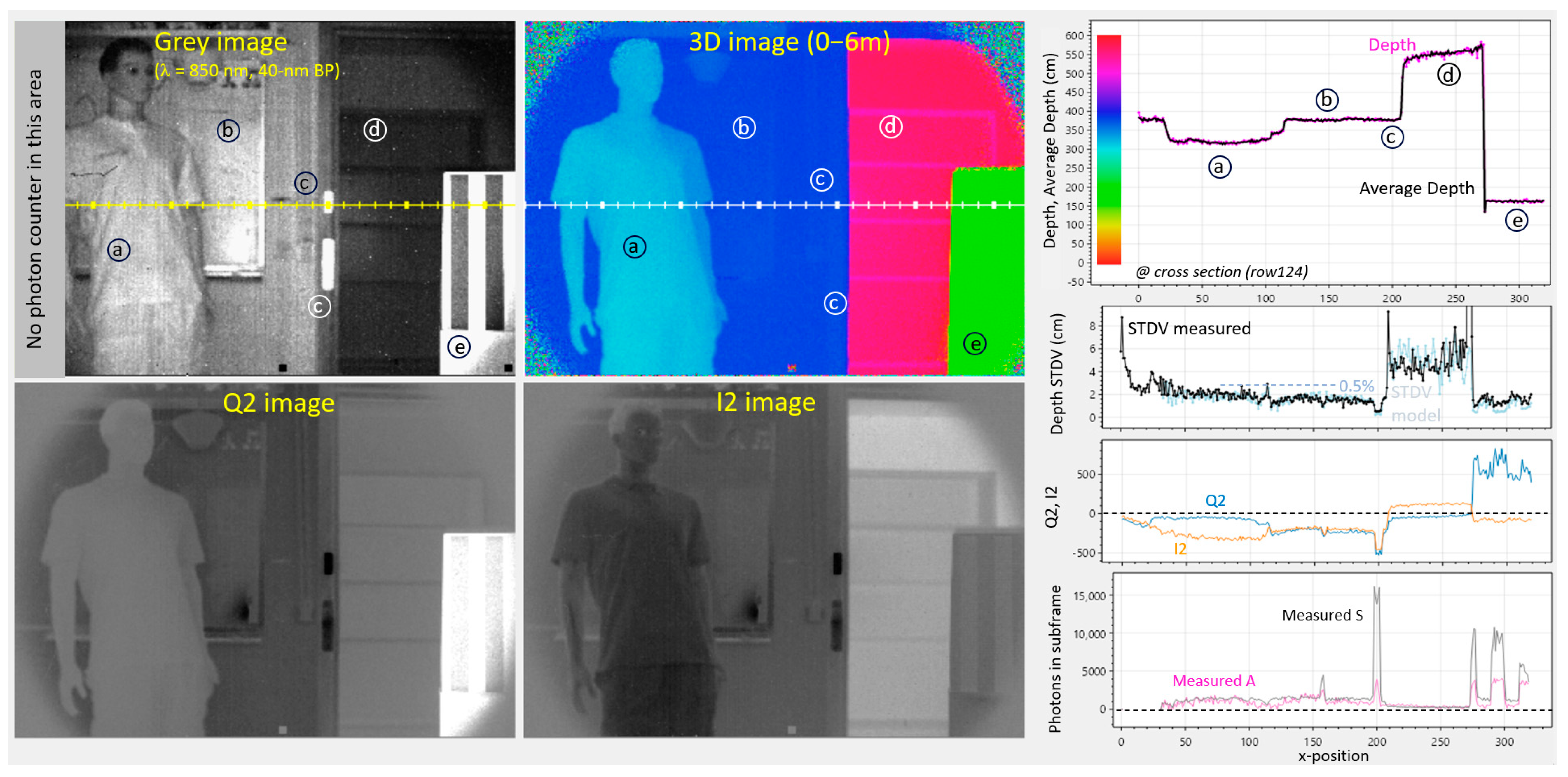

Kuijk et al. from Vrije University and Sony Depthsensing Belgium published a paper titled "320 × 240 SPAD Direct Time-of-Flight Image Sensor and Camera Based on In-Pixel Correlation and Switched-Capacitor Averaging" in the special IISW issue of Sensors journal.

Abstract: Correlation-Assisted Direct Time-of-Flight (CA-dToF) is demonstrated for the first time on a large 320 × 240-pixel SPAD array sensor that includes on-chip high-speed timing support circuitry. SPAD events are processed in-pixel, avoiding data communication over the array and/or storage bottlenecks. This is accomplished by sampling two orthogonal triangle waves that are synchronized with short light pulses illuminating the scene. Using small switched-capacitor circuits, exponential moving averaging (EMA) is applied to the sampled voltages, delivering two analog voltages (VQ2, VI2). These contain the phase delay, or the time of flight between the light pulse and photon’s time of arrival (ToA). Uncorrelated ambient photons and dark counts are averaged out, leaving only their associated shot noise impacting the phase precision. The QVGA camera allows for capturing depth-sense images with sub-cm precision over a 6 m range of detection, even with a small PDE of 0.7% at an 850 nm wavelength.
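The correlation principle in the abstract can be sketched in a few lines. This is a simplified single-stage software model, not the paper's two-stage switched-capacitor circuit: each photon arrival samples two triangle waves a quarter period apart, an exponential moving average smooths the samples, and the phase is read from the ratio of the two averages. The function names, the EMA coefficient, and the piecewise-linear phase formula are assumptions made for illustration.

```python
C = 299_792_458.0  # speed of light in m/s


def tcos(p):
    """Triangle wave with period 1: +1 at p = 0, -1 at p = 0.5."""
    p = p % 1.0
    return 4.0 * abs(p - 0.5) - 1.0


def tsin(p):
    """Same triangle wave delayed by a quarter period."""
    return tcos(p - 0.25)


def ema_iq(arrival_phases, alpha=0.02):
    """Exponential moving average of both samples at each photon arrival."""
    i_avg = q_avg = 0.0
    for p in arrival_phases:
        i_avg += alpha * (tcos(p) - i_avg)
        q_avg += alpha * (tsin(p) - q_avg)
    return i_avg, q_avg


def phase_from_iq(i_avg, q_avg):
    """Recover the phase (fraction of a period) from triangle-wave I/Q."""
    norm = abs(i_avg) + abs(q_avg)
    if norm == 0.0:
        raise ValueError("no correlated signal")
    i, q = i_avg / norm, q_avg / norm
    if q >= 0.0:
        return 0.25 * q if i >= 0.0 else 0.25 + 0.25 * (-i)
    return 0.5 + 0.25 * (-q) if i < 0.0 else 0.75 + 0.25 * i


def distance_m(phase, f_mod_hz):
    """Convert a phase fraction to distance for a given repetition rate."""
    return C * phase / (2.0 * f_mod_hz)


if __name__ == "__main__":
    i_avg, q_avg = ema_iq([0.35] * 500)
    print(distance_m(phase_from_iq(i_avg, q_avg), 25e6))
```

Arrivals spread uniformly over the period, such as ambient photons and dark counts, average to zero in both channels in expectation, so they shrink the amplitude without shifting the recovered phase. At the 25 MHz rate mentioned in the Figure 11 caption, a full period corresponds to roughly 6 m, consistent with the detection range quoted in the abstract.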

Open access DOI link: https://doi.org/10.3390/s25216772

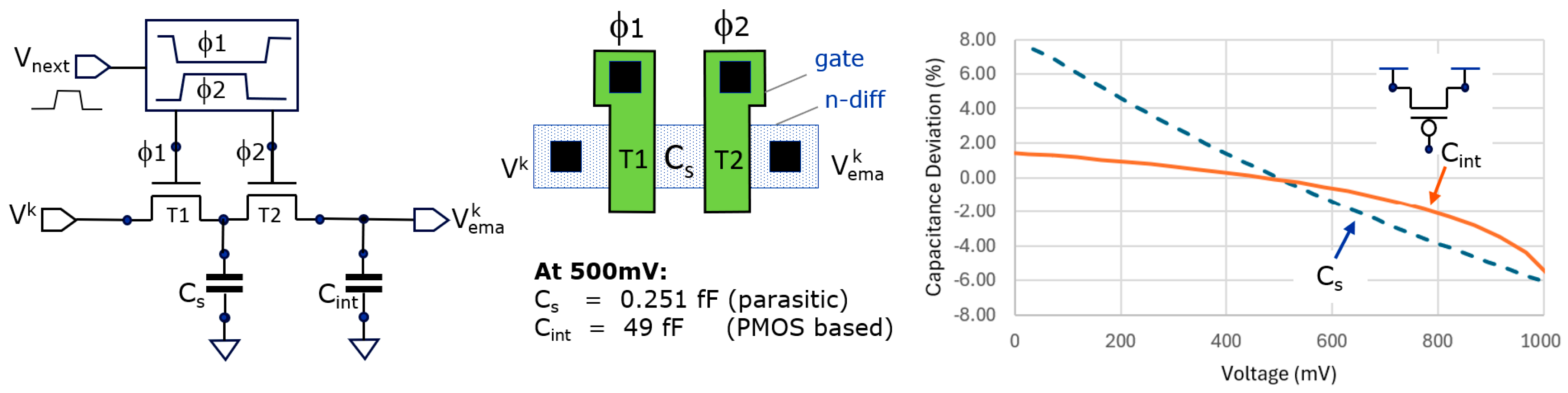

Figure 3. The practical EMA implementation consists of generating non-overlapping clocks (f1 and f2) in response to an edge transition from Vnext, driving the gates of two NMOS transistors (left); the parasitic capacitance of the substrate diffusion diode between the two transistors forms Cs (center); the non-linear behavior of Cs and Cint, obtained from SPICE simulation, is shown on the right.

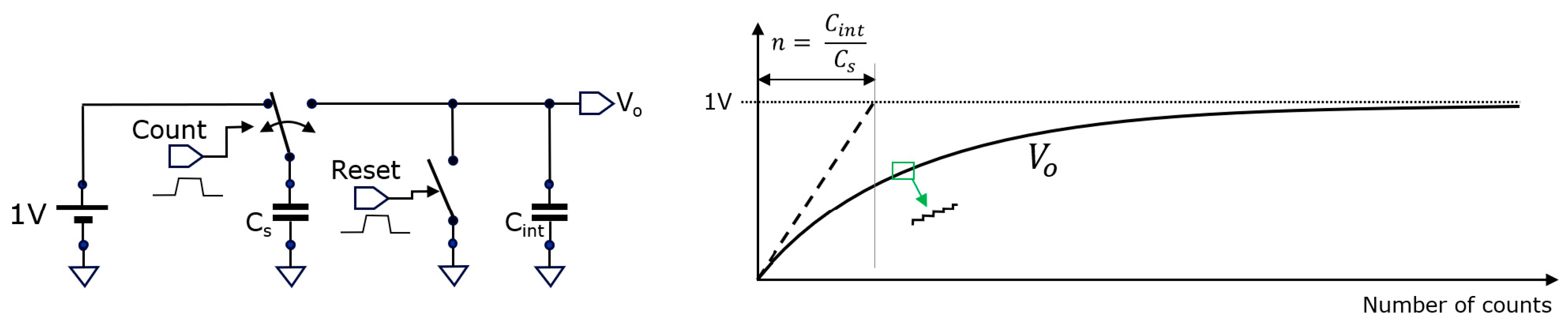

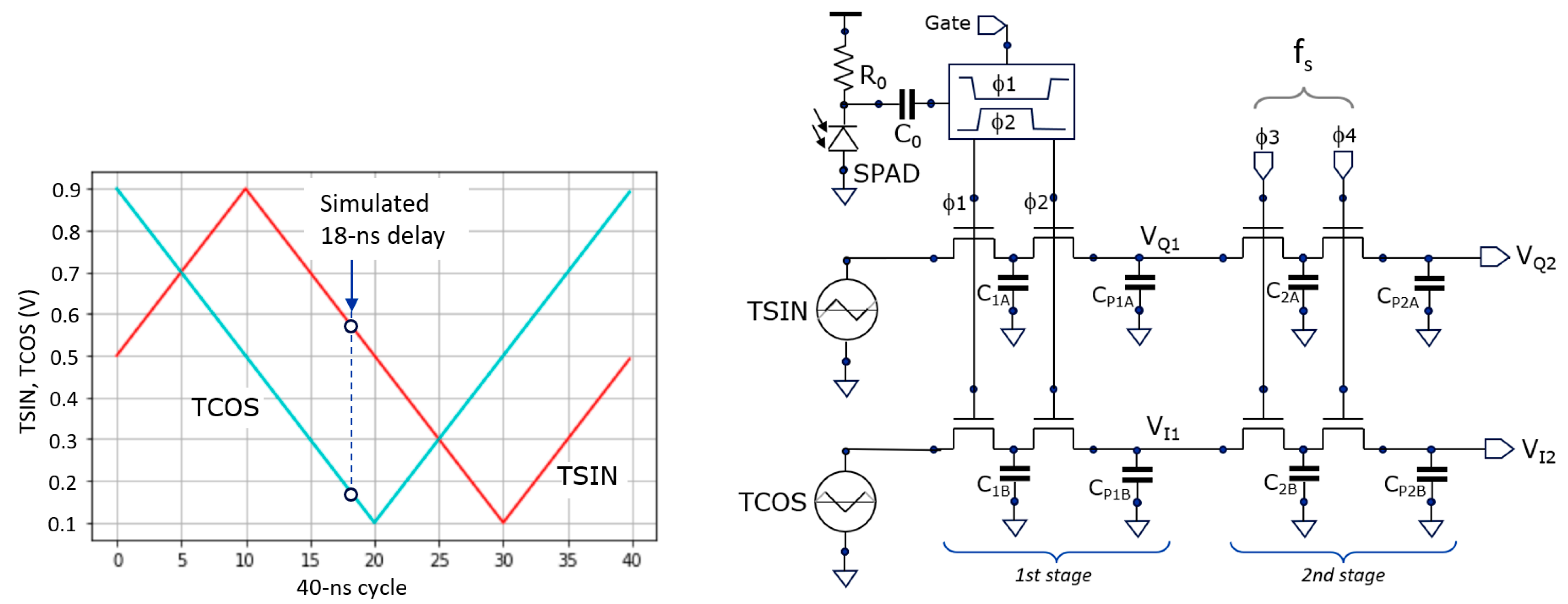

Figure 5. An analog counter based on a switched-capacitor principle, useful for counting events like incident photons. When zooming-in, the step-like behavior becomes visible (in green).

Figure 6. The correlation functions TCOS and TSIN (left) and the schematic (right) of the two-stage averaging system for correlating the incident ToAs of photons with these functions.

Figure 8. The pixel circuit has a non-overlapping clock generator, a photon counter, and two-stage averaging for the sampled triangular TSIN and TCOS signals.

Figure 11. Demodulation using (0°, 180°) phases at 25 MHz: Gray, Q2, I2, and 3D images. Shown right are cross-sections from the image’s row 124, giving more quantitative results, including measured and modeled depth STDV. Color scale is present in the depth-graph (upper right). Ambient is 1 klux at the whiteboard (b) and 2 klux at the box (e).

Wednesday, February 18, 2026

ForzaFAST581 high-speed global-shutter CIS paper

Agarwal et al. from Forza Silicon (Pasadena, CA) published a paper titled "High-Frame-Rate Low-Noise Global Shutter CMOS Image Sensor for High-Speed Machine Vision" in the IISW special issue of MDPI Sensors journal.

Open access link: https://www.mdpi.com/1424-8220/26/4/1117

Abstract: In this paper we present a low-noise, high-frame-rate global shutter CMOS image sensor with UHD resolution (3840 × 2160), targeting high-speed machine vision applications. The sensor (ForzaFAST581) supports video capture at up to 1141 FPS at 12 bits and 1694 FPS at 8 bits at full resolution, consuming a total power of 5.5 W. Fabricated in a 65 nm, four-metal BSI process, the imager features a 5 µm voltage-domain global shutter pixel with dual-gain capability for improved dynamic range and a read noise of 3.04 e− in global shutter and 2.15 e− in rolling shutter mode for high-gain at maximum frame rate operation. For compact camera integration and low power consumption, the sensor is designed to stream video through 16 CML data ports, each operating at 7.44 Gbps, achieving a total aggregate throughput of 119 Gbps. Additionally, the sensor supports selectable output bit depths—8-bit, 10-bit, and 12-bit—allowing frame rate optimization based on application-specific requirements.
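The throughput numbers in the abstract can be cross-checked with simple arithmetic. The sketch below compares the raw pixel payload at the two quoted operating points with the aggregate capacity of the 16 CML ports. It ignores blanking, framing, and any line-coding overhead, none of which are specified in this post, and the function names are illustrative.

```python
def pixel_payload_gbps(width, height, fps, bits_per_pixel):
    """Raw pixel data rate in Gbps, ignoring blanking and link overhead."""
    return width * height * fps * bits_per_pixel / 1e9


def link_capacity_gbps(ports, gbps_per_port):
    """Aggregate serial link capacity in Gbps."""
    return ports * gbps_per_port


if __name__ == "__main__":
    capacity = link_capacity_gbps(16, 7.44)
    for fps, bits in ((1141, 12), (1694, 8)):
        payload = pixel_payload_gbps(3840, 2160, fps, bits)
        print(f"{bits}-bit @ {fps} FPS: {payload:.1f} of {capacity:.1f} Gbps")
```

Both operating points land at roughly 112 to 114 Gbps of pixel payload, just under the 119 Gbps aggregate, which suggests that the maximum frame rate at each bit depth is set by the output link.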

Figure 1. Image sensor (ForzaFAST581) die photo with 268-Pin LGA Package.

Figure 9. Bottom digital block floor plan.

Figure 13. (a) High-speed signal path (from clock receiver to serialized data output), (b) high-speed 16-to-1 serializer architecture.

Figure 16. Quantum efficiency vs. wavelength (in nm).

Monday, February 16, 2026

Himax full year financial results for 2025 are out

Excerpts:

Himax’s visibility for the whole year outlook of automotive sector remains limited amid the backdrop of uncertain government policy and consumer sentiment. However, the Company expects the first quarter to be the trough of the year, with sales rebounding in the second quarter and business momentum continuing to improve into the second half, supported by lean customer inventory levels and new projects for automotive customers scheduled to MP later in the year. Continued growth in non-driver IC businesses, particularly Tcon and WiseEye AI, should provide incremental support.

Himax continues to expand into areas such as ultralow power AI for endpoint devices, Front-lit LCoS microdisplay and waveguide for AR glasses, and WLO for co-packaged optics, all of them are with exciting upside potential in the next couple of years, driven by the recent breakout of AI. Himax expects these initiatives to become new meaningful growth drivers while also improving Company’s product mix and overall profitability.

WiseEye is gaining strong traction in smart glasses, with a growing number of design-in engagements underway among global tech names, solution platform providers, and smart glasses specialists. A leading brand’s smart glasses are poised to enter mass production later this year, marking an important milestone for WiseEye in the smart glasses market.

[... WiseEye ... incorporates Himax proprietary ultralow power AI processor, always-on CMOS image sensor, and CNN-based AI algorithm ... consumer electronics and AIoT related applications.]

Friday, February 13, 2026

CIS startup MetaSilicon raises over $40m

https://www.startupresearcher.com/news/metasilicon-secures-over-usd43-2-million-in-series-a-funding

MetaSilicon, a designer of high-dynamic CMOS image sensors, has successfully closed its A+ financing round, securing over $43.2 million. The funding, led by a consortium of prominent investors, is earmarked for accelerating research and development efforts. This strategic capital infusion will bolster the company's dual-track strategy targeting both the automotive and consumer electronics markets.

Strategic Investment and Market Confidence

The round was jointly led by Ceyuan Capital, Wuxi Industrial Investment, and the FAW Hongqi Private Equity Fund, signaling strong confidence in MetaSilicon's vision. A diverse group of new investors, including Innovation Works and CSC Financial, also participated in the financing. Existing shareholder GRC SinoGreen demonstrated continued support by increasing its investment, underscoring the company's promising trajectory.

Rapid Growth and Commercial Success

Since its inception, MetaSilicon has demonstrated remarkable growth, with its revenue soaring from just a few million yuan in 2023 to nearly $28.8 million in 2025. This financial achievement is complemented by significant operational scale, as the company has shipped over 75 million chips to date. This rapid expansion has established MetaSilicon as one of the fastest-growing image sensor design firms in the industry.

Dual-Track Market Domination

The company's success is built on a dual-track strategy that effectively serves two major technology sectors. In consumer electronics, MetaSilicon has delivered nearly 100 projects for industry giants such as Samsung, Xiaomi, and OPPO. This broad adoption by leading brands highlights the quality and competitiveness of its sensor technology in a highly demanding market.

Simultaneously, MetaSilicon has made significant inroads into the smart automotive industry, a key area for future growth. Its 1.3-megapixel and 3-megapixel automotive-grade sensors have passed rigorous validation with over 20 OEMs and Tier 1 suppliers. The company has established deep collaborations, notably with FAW Hongqi, achieving mass production for critical in-vehicle systems.

Advancing Automotive Sensor Technology

These automotive chips are already being integrated into essential applications like Advanced Driver-Assistance Systems (ADAS), in-cabin monitoring, and electronic rearview mirrors. This widespread implementation in production vehicles confirms the reliability and performance of MetaSilicon's technology. The company's ability to secure pre-installation contracts signifies its trusted position within the automotive supply chain.

Looking ahead, MetaSilicon is developing a next-generation 8-megapixel automotive CIS chip to meet the demands of advanced autonomous driving. This high-performance sensor is specifically designed for high-end ADAS, prioritizing superior night vision, high dynamic range, and anti-interference capabilities. The company plans to begin market promotion for this innovative product in 2026, reinforcing its technological leadership.

This successful A+ financing round marks a significant milestone for MetaSilicon, providing the necessary resources to fuel its next phase of innovation. According to founder and chairman Liu Canyi, the capital will be pivotal in deepening R&D investment and enhancing product value for customers. With a proven track record and a clear vision for the future, MetaSilicon is well-positioned to solidify its leadership in the competitive image sensor market.

Grass Valley needs an Engineer in The Netherlands

Grass Valley Nederland B.V.

Hardware-Sensor Engineer - Breda, Netherlands - Link

Thursday, February 12, 2026

IR sensor tech firm Senseeker acquires Axis Machine

Santa Barbara, California (February 11th, 2025) - Senseeker Corp, a leading innovator of digital infrared image sensing technology, can now respond to customer requirements more quickly and thoroughly through the acquisition of Axis Machine (Santa Barbara, California) by Senseeker Machining Company (SMC).

Senseeker Machining Company will continue to support and grow Axis Machine’s established customer base built up over 20+ years in delivering high-quality machined parts. The acquisition will enable Senseeker to further grow mechanical component lines and to reduce the lead time on machined parts used in Senseeker’s programs and portfolio of industry standard commercial cryogenic test equipment for testing infrared focal plane arrays.

SMC will continue to operate from the existing machine shop facility, located at 81 David Love Place, just a short walk from the Senseeker Corp headquarters in Santa Barbara. The SMC facility is equipped with several 3-Axis and 4-Axis CNC Machining Centers, Lathes and Multi-Axis Milling Equipment to be able to maintain a high throughput of work. A Mitutoyo DCC-CMM, optical comparator and a full range of precision inspection tools are used for quality control. SMC also runs industry standard CAD and CNC programming software.

“Bringing high-quality machining capability to Senseeker is an important step in the evolution of the company’s unique lateral business model. Senseeker’s cryogenic Sensor Test Unit product lines have grown significantly in recent years and this acquisition will help accelerate delivery times,” said Kenton Veeder, CEO of Senseeker. “Additionally, our mechanical engineering has expanded across our program portfolio and our new machining capability will help us build better mechanical systems through tight coupling between machining and engineering. We are excited to build SMC into a high-quality machining organization for existing shop customers and new sensor community customers alike.”

Monday, February 09, 2026

Paper on 3D-stacked InGaAs/InP SPAD

Proposed complementary optical gating pixel for InGaAs SPADs (a) arranged in a 96 × 96 array (b) and its timing diagram (c).

Micrograph of the bottom tier (a) and 3D-stacked chip micrograph (b). Illustration of the indium bump bonding scheme (c).

Friday, February 06, 2026

Passive SPAD simulator and dataset

Preprint: https://arxiv.org/abs/2601.12850

In a preprint titled "Accurate Simulation Pipeline for Passive Single-Photon Imaging" Suonsivu et al. write:

Single-Photon Avalanche Diodes (SPADs) are new and promising imaging sensors. These sensors are sensitive enough to detect individual photons hitting each pixel, with extreme temporal resolution and without readout noise. Thus, SPADs stand out as an optimal choice for low-light imaging. Due to the high price and limited availability of SPAD sensors, the demand for an accurate data simulation pipeline is substantial. Indeed, the scarcity of SPAD datasets hinders the development of SPAD-specific processing algorithms and impedes the training of learning-based solutions. In this paper, we present a comprehensive SPAD simulation pipeline and validate it with multiple experiments using two recent commercial SPAD sensors. Our simulator is used to generate the SPAD-MNIST, a single-photon version of the seminal MNIST dataset, to investigate the effectiveness of convolutional neural network (CNN) classifiers on reconstructed fluxes, even at extremely low light conditions, e.g., 5 mlux. We also assess the performance of classifiers exclusively trained on simulated data on real images acquired from SPAD sensors at different light conditions. The synthetic dataset encompasses different SPAD imaging modalities and is made available for download.
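For readers new to passive single-photon imaging, the core of such a simulator can be sketched with the standard Poisson arrival model. This is a simplified illustration and not the authors' pipeline: each binary frame registers a detection with probability 1 − exp(−(η·φ + d)·τ), and the flux is recovered by inverting that expression. The parameter names (flux φ, exposure τ, efficiency η, dark count rate d) and function names are assumptions made for this sketch.

```python
import math
import random


def detection_probability(flux_hz, exposure_s, pde=1.0, dcr_hz=0.0):
    """Probability that a binary frame registers at least one count."""
    return 1.0 - math.exp(-(pde * flux_hz + dcr_hz) * exposure_s)


def simulate_binary_frames(flux_hz, exposure_s, n_frames, pde=1.0,
                           dcr_hz=0.0, rng=None):
    """Draw n_frames one-bit samples for a single pixel."""
    rng = rng or random.Random()
    p = detection_probability(flux_hz, exposure_s, pde, dcr_hz)
    return [1 if rng.random() < p else 0 for _ in range(n_frames)]


def estimate_flux(detections, n_frames, exposure_s, pde=1.0, dcr_hz=0.0):
    """Maximum-likelihood flux estimate from the fraction of frames that fired."""
    fraction = detections / n_frames
    if fraction >= 1.0:
        raise ValueError("pixel saturated: every frame fired")
    total_rate = -math.log(1.0 - fraction) / exposure_s
    return (total_rate - dcr_hz) / pde


if __name__ == "__main__":
    rng = random.Random(0)
    frames = simulate_binary_frames(1000.0, 1e-3, 10_000, pde=0.5,
                                    dcr_hz=10.0, rng=rng)
    print(estimate_flux(sum(frames), len(frames), 1e-3, pde=0.5, dcr_hz=10.0))
```

A realistic simulator would also need effects such as dead time, afterpulsing, crosstalk, and hot pixels, which this sketch leaves out.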

The dataset download link is here: https://boracchi.faculty.polimi.it/Projects/SPAD-MNIST.html

This is based on work presented at the Synthetic Data for Computer Vision Workshop at the European Conference on Computer Vision (ECCV) in 2024.
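As a rough illustration of what a passive SPAD simulator has to model, the sketch below draws 1-bit frames from a Poisson photon-arrival model and inverts the detection probability to recover the flux. This is a textbook model and not the pipeline from the preprint; the function names, the photon detection efficiency `pde`, the dark-count term, and all numeric values are assumptions made for illustration.

```python
import math
import random

def detection_probability(flux_hz, exposure_s, pde=0.4, dark_rate_hz=0.0):
    """Probability that a pixel fires at least once during one binary frame."""
    mean_events = (pde * flux_hz + dark_rate_hz) * exposure_s
    return 1.0 - math.exp(-mean_events)

def simulate_binary_frames(flux_hz, exposure_s, n_frames, rng, pde=0.4):
    """Count how many of n_frames register a detection (1-bit frames)."""
    p = detection_probability(flux_hz, exposure_s, pde)
    return sum(1 for _ in range(n_frames) if rng.random() < p)

def estimate_flux(n_detections, n_frames, exposure_s, pde=0.4):
    """Maximum-likelihood flux estimate from the fraction of frames that fired."""
    # Clamp the fraction so a fully saturated pixel does not produce log(0).
    fraction = min(n_detections / n_frames, 1.0 - 1.0 / n_frames)
    return -math.log(1.0 - fraction) / (pde * exposure_s)

rng = random.Random(0)
true_flux = 1.25e5   # photons per second per pixel (hypothetical)
exposure = 1e-5      # 10 microsecond binary frame (hypothetical)
frames = 20000

hits = simulate_binary_frames(true_flux, exposure, frames, rng)
flux_hat = estimate_flux(hits, frames, exposure)
print(f"true flux {true_flux:.3g} Hz, estimated {flux_hat:.3g} Hz")
```

The logarithmic inversion matters because a binary pixel cannot tell one photon from several in the same frame, so the raw fraction of fired frames underestimates the flux as the scene gets brighter.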

Wednesday, February 04, 2026

Samsung's US fab for iPhone CIS

Monday, February 02, 2026

Canon's weighted photon counting SPAD array

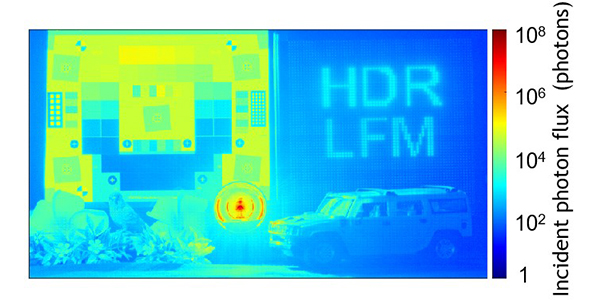

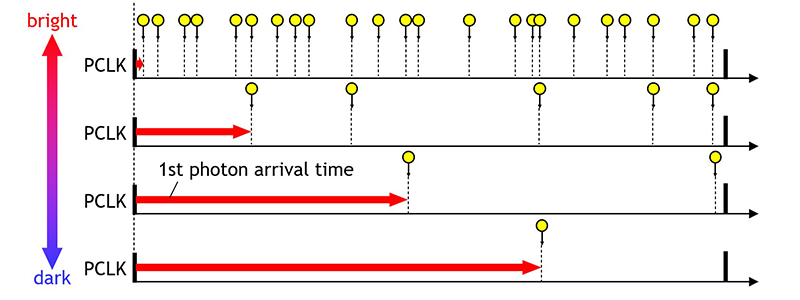

In June 2025 Canon announced an HDR SPAD sensor that performs weighted counting (as opposed to simply accumulating photon counts): https://global.canon/en/news/2025/20250612.html

Canon develops High Dynamic Range SPAD sensor with potential to detect subjects even in low-light conditions or environments with strong lighting contrasts thanks to unique technology

TOKYO, June 12, 2025—Canon Inc. announced today that it has developed a 2/3" SPAD sensor featuring approximately 2.1 megapixels and a high dynamic range of 156dB. Thanks to a unique circuit technology, it realizes high dynamic range, low power consumption, and the ability to mitigate flickering from LED lights. Canon will continue further technological development and aims to start mass production.

SPAD sensors employ a principle called photon counting, which detects each photon (light particle) entering a pixel and counts the incident number of photons. This sensor does not take in any noise during the readout process, making it possible to capture a clear image of subjects. Also, it can measure the distance to the subject at high speed with excellent timing precision.

However, due to limitations in processing speed, when the incident number of photons exceeds a certain threshold level under high-illuminance conditions, conventional SPAD sensors experienced difficulties when separating individual photons to read out, which caused the acquired image to white out. In addition, such sensors consume a large amount of power as each photon counting independently consumes power.

On the other hand, Canon's newly developed SPAD sensor uses a unique technology called “weighted photon counting.” Focusing on the fact that the frequency at which photons reach the sensor correlates with illuminance, this technology measures the time it takes for the initial photon to reach the pixel within a certain time frame, then estimates the total number of photons that will arrive at the pixel over a certain time period. As a result, the image does not white-out due to a large number of photons precisely estimated while they are not being actually counted, allowing the subject to be captured clearly.

While the conventional SPAD sensor actually counts all incident photons one by one, the new method estimates the total amount of incident photons within a certain timeframe based on the time it takes for the first incident photon to arrive. As a result, the new sensor achieves a high dynamic range of 156dB, approximately five times higher than the previous sensor. At the same time, this approach limits the power consumption per pixel by roughly 75% by reducing the frequency of photon detections. In addition, this technology also mitigates the flickering that occurs when capturing light from LEDs such as traffic lights.
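The idea of inferring brightness from the first photon's arrival time can be sketched with a simple Poisson model: if photons arrive at a constant rate, the waiting time for the first one is exponentially distributed with mean 1/rate. The snippet below is only a statistical illustration under that assumption, not Canon's circuit; averaging over many windows, the function names, and the numeric values are all hypothetical. It also converts 156 dB to a linear ratio, assuming the 20·log10 convention commonly used for image-sensor dynamic range.

```python
import math
import random

def first_arrival_time(rate_hz, rng):
    """Waiting time for the first photon, for Poisson arrivals at rate_hz."""
    return rng.expovariate(rate_hz)

def estimate_rate(first_arrival_times):
    """Invert the mean first-arrival time to estimate the photon rate."""
    return len(first_arrival_times) / sum(first_arrival_times)

def db_to_ratio(db):
    """Linear signal ratio for a dynamic range in dB (20*log10 convention)."""
    return 10.0 ** (db / 20.0)

rng = random.Random(0)
true_rate = 5.0e8   # photons per second, a bright pixel (hypothetical)
window = 1.0e-6     # length of the estimation window in seconds (hypothetical)

times = [first_arrival_time(true_rate, rng) for _ in range(20000)]
rate_hat = estimate_rate(times)
photons_per_window = rate_hat * window  # estimated, never counted one by one

print(f"estimated photons per window: {photons_per_window:.1f}")
print(f"156 dB corresponds to a ratio of about {db_to_ratio(156.0):.3g}")
```

A single first-arrival time is a very noisy estimate, which is why this sketch averages many of them; how the actual sensor handles that noise is not described in the announcement.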

Canon anticipates that this new sensor will have a wide variety of applications, such as surveillance, onboard vehicle equipment, and industrial use. For instance, it is expected to be applied to autonomous driving and advanced driving-assistance systems. As autonomous driving technology advances, the demand for onboard sensors is increasing. At the same time, as many countries increasingly tighten related safety standards, there is a need for advanced sensor technology to ensure the safety of autonomous driving. However, the currently available CMOS sensors that are commonly used in vehicles are known to have several issues with visibility in environments with strong contrasts between bright and dark scenes, such as tunnel exits, or extremely low light conditions. Canon has addressed these issues by combining new features with the conventional SPAD sensors, which excel in low-light shooting.

Canon announced this new sensor technology on June 12, 2025 at the 2025 Symposium on VLSI Technology and Circuits held in Kyoto, Japan.

- While conventional SPAD sensors count all incident photons one by one, the newly developed SPAD sensor uses a unique technology called weighted photon counting that estimates the total amount of incident photons within a certain period of time based on the detection of the first incident photon. This greatly widens the number of photons that can be measured.

- This technology can also mitigate flickering when light from LEDs such as traffic lights is captured.

Weighted photon counting enables photon detection in both high and low levels of illuminance

With excellent high dynamic range performance of 156dB, a clear image is captured including bright and dark subjects

Simplified illustration of the weighted photon counting technique. The earlier the arrival of the first incident photon, the brighter the incident light.

Friday, January 30, 2026

Sony releases image stabilizer chip

Link: https://www.sony-semicon.com/en/products/lsi-ic/stabilizer.html

The Stabilizer Large-Scale Integration (LSI) CXD5254GG chip combines an image sensor and 6-axis inertial measurement unit (IMU) to perform electronic image stabilization (EIS), removing vibrations and maintaining a level horizon in the video input from the image sensor, and outputting the stabilized image. The advanced algorithm for attitude control reduces blurs caused by camera vibrations and achieves both real-time horizon stabilization and suppression of “jello effect” video distortion. The Stabilizer LSI is also equipped with Sony’s unique contrast improvement feature, the intelligent Picture Controller (iPC). Together with the stabilizing features, it enables the camera to clearly capture objects or information that could not be previously recognized due to vibrations.

The CXD5254GG creates new imaging value that conventional camera technologies cannot achieve, enabling applications across a wide range of fields including broadcasting, sports entertainment, security, and robotics. In addition to the CXD5254GG itself, a choice of compact camera modules combining the IMX577 sensor and lens is also available for broadcasting/video production applications, meeting a wide range of user needs.

The product performs a wide range of signal processing including high-precision blur correction via EIS, horizon maintenance, suppression of the jello effect, and lens distortion correction. We also provide established stabilizer sample parameters, derived from a variety of actual applications including onboard cameras, dashboard cameras, wearable devices, first-person view (FPV) drones, remote-controlled (RC) cars, and fixed-point cameras, backed by Sony’s many years of expertise and know-how. These sample parameter configurations can be optimized for specific applications to maximize the potential of the CXD5254GG’s stabilizing performance.
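To give a feel for how an IMU can drive horizon leveling, here is a minimal complementary-filter sketch: the gyro rate is integrated for fast response and blended with the accelerometer's gravity direction to cancel drift, and the image is then counter-rotated by the estimated roll. This is a generic technique, not Sony's algorithm; the axis convention (x to the right, y down in the image plane, roll about the optical axis), the blend factor `alpha`, and all function names are assumptions.

```python
import math

def accel_roll(ax, ay):
    """Roll angle in radians implied by gravity in the image plane (0 when level)."""
    return math.atan2(ax, ay)

def update_roll(roll, gyro_z, ax, ay, dt, alpha=0.98):
    """Blend the integrated gyro rate with the drift-free accelerometer angle."""
    predicted = roll + gyro_z * dt
    return alpha * predicted + (1.0 - alpha) * accel_roll(ax, ay)

def horizon_correction(roll):
    """Rotation to apply to the frame so that the horizon stays level."""
    return -roll

# Static camera tilted by 10 degrees: the gyro reads zero, gravity is rotated.
tilt = math.radians(10.0)
ax, ay = 9.81 * math.sin(tilt), 9.81 * math.cos(tilt)

roll = 0.0
for _ in range(500):                      # 500 samples at 1 kHz (hypothetical)
    roll = update_roll(roll, 0.0, ax, ay, dt=0.001)

correction_deg = math.degrees(horizon_correction(roll))
print(f"counter-rotation to apply: {correction_deg:.2f} degrees")
```

A higher `alpha` trusts the gyro more and rejects accelerometer noise from vibration, at the cost of slower recovery from an initial tilt error.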

Wednesday, January 28, 2026

EETimes Prophesee article

Monday, January 26, 2026

Sony's global shutter image sensor in JSSC

In a recent paper titled "A 5.94-μm Pixel-Pitch 25.2-Mpixel 120-Frames/s Full-Frame Global Shutter CMOS Image Sensor With Pixel-Parallel 14-bit ADC", Sakakibara et al. from Sony Semiconductor Solutions (Japan) write:

We present a 25.2-Mpixel, 120-frames/s full-frame global shutter CMOS image sensor (CIS) featuring pixel-parallel analog-to-digital converters (ADCs). The sensor addresses the limitations of conventional rolling shutters (RSs), including motion distortion, flicker artifacts, and flash banding, while maintaining image quality suitable for professional and advanced amateur photography. A stacked architecture with 3-μm-pitch Cu–Cu hybrid bonding enables more than 50 million direct connections between the pixel array and the ADC circuits. The pixel-parallel single-slope ADCs operate with a comparator current of 25 nA and use a positive-feedback (PFB) scheme with noise-bandwidth control using an additional 11.4-fF capacitor, achieving 2.66 e−rms (166.8 μVrms) random noise (RN) at 0-dB gain with an REF slope of 2161 V/s. The 5.94-μm pixel pitch accommodates 30-bit latches designed under SRAM rules in a 40-nm CMOS process. Noise analysis reveals that in subthreshold operation, the dominant noise contributors are the comparator current, REF slope, and second-stage load capacitance. The sensor delivers 14-bit resolution, a 75.5-dB dynamic range (DR), and 120-frames/s operation at a power consumption of 1545 mW. A figure of merit of 0.083 e−rms·pJ/step is comparable to state-of-the-art RS sensors. These results demonstrate that pixel-parallel ADC technology can be scaled to tens of megapixels while preserving high image quality and energy efficiency, enabling motion-artifact-free imaging in battery-powered consumer cameras.

Full paper link [behind paywall]: https://ieeexplore.ieee.org/document/11219086
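The headline numbers in the abstract can be cross-checked with a few lines of arithmetic. The sketch below assumes the figure of merit is defined as noise × power / (pixels × frame rate × 2^bits), a common definition that happens to reproduce the quoted 0.083 e−rms·pJ/step; the abstract itself does not spell out the formula. It also assumes the two noise figures refer to the same node, and that dynamic range follows the 20·log10 convention. Variable names are illustrative.

```python
# Values quoted in the abstract
pixels = 25.2e6          # 25.2 Mpixel
frame_rate = 120.0       # frames per second
adc_bits = 14
power_w = 1.545          # 1545 mW
noise_e = 2.66           # random noise in electrons rms
noise_uv = 166.8         # the same noise in microvolts rms
dr_db = 75.5             # dynamic range in dB

# Raw ADC output rate, ignoring blanking and any compression
raw_rate_gbps = pixels * frame_rate * adc_bits / 1e9

# Implied conversion gain (assumes both noise figures describe the same node)
conversion_gain_uv_per_e = noise_uv / noise_e

# Energy per pixel conversion, then the assumed figure-of-merit definition
energy_pj = power_w / (pixels * frame_rate) * 1e12
fom = noise_e * energy_pj / 2 ** adc_bits

# Largest signal implied by the dynamic range (20*log10 convention)
max_signal_e = noise_e * 10 ** (dr_db / 20.0)

print(f"raw data rate        : {raw_rate_gbps:.1f} Gbit/s")
print(f"conversion gain      : {conversion_gain_uv_per_e:.1f} uV/e-")
print(f"energy per conversion: {energy_pj:.0f} pJ")
print(f"figure of merit      : {fom:.3f} e-rms*pJ/step")
print(f"implied max signal   : {max_signal_e:.0f} e-")
```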

Sunday, January 25, 2026

Conference List - July 2026

2nd International Conference on Optical Imaging and Detection Technology (OIDT 2026) - 3-5 July 2026 - Yulin, China - Website

New Developments in Photodetection - 6-10 July 2026 - Troyes, France - Website

11th International Smart Sensor Technology Exhibition - 8-10 July 2026 - Goyang, South Korea - Website

Tenth International Conference on Imaging, Signal Processing and Communications - 11-13 July 2026 - Kobe, Japan - Website

IEEE International Conference on Flexible Printable Sensors and Systems - 12-15 July 2026 - Atlanta, Georgia, USA - Website

Optica Sensing Congress - 12-17 July 2026 - Maastricht, Netherlands - Website

IEEE Sensors Applications Symposium - 15-17 July 2026 - Vitoria, Brazil - Website

American Association of Physicists in Medicine 67th Annual Meeting and Exhibition - 19-22 July 2026 - Vancouver, BC, Canada - Website

IEEE Nuclear & Space Radiation Effects Conference (NSREC) - 20-24 July 2026 - San Juan, Puerto Rico, USA - Website

34th International Workshop on Vertex Detectors - 20-24 July 2026 - Stoos, Switzerland - Website

If you know about additional local conferences, please add them as comments.


Thursday, January 22, 2026

Synthetic aperture imager

Link: https://scitechdaily.com/this-breakthrough-image-sensor-lets-scientists-see-tiny-details-from-far-away/

Open-access paper: Multiscale aperture synthesis imager https://www.nature.com/articles/s41467-025-65661-8

A new lens-free imaging system uses software to see finer details from farther away than optical systems ever could before.

Imaging technology has reshaped how scientists explore the universe – from charting distant galaxies using radio telescope arrays to revealing tiny structures inside living cells. Despite this progress, one major limitation has remained unresolved. Capturing images that are both highly detailed and wide in scope at optical wavelengths has required bulky lenses and extremely precise physical alignment, making many applications difficult or impractical.

Researchers at the University of Connecticut may have found a way around this obstacle. A new study led by Guoan Zheng, a biomedical engineering professor and director of the UConn Center for Biomedical and Bioengineering Innovation (CBBI), along with his team at the University of Connecticut College of Engineering, was published in Nature Communications. The work introduces a new imaging strategy that could significantly expand what optical systems can do in scientific research, medicine, and industrial settings.

Why Synthetic Aperture Imaging Breaks Down at Visible Light

“At the heart of this breakthrough is a longstanding technical problem,” said Zheng. “Synthetic aperture imaging – the method that allowed the Event Horizon Telescope to image a black hole – works by coherently combining measurements from multiple separated sensors to simulate a much larger imaging aperture.”

This approach works well in radio astronomy because radio waves have long wavelengths, which makes precise coordination between sensors achievable. Visible light operates on a much smaller scale. At those wavelengths, the physical accuracy needed to keep multiple sensors synchronized becomes extremely difficult to maintain, placing strict limits on traditional optical synthetic aperture systems.

Letting Software Do the Synchronizing

The Multiscale Aperture Synthesis Imager (MASI) addresses this challenge in a fundamentally different way. Instead of requiring sensors to remain perfectly synchronized during measurement, MASI allows each optical sensor to collect light on its own. Computational algorithms are then used to align and synchronize the data after it has been captured.

Zheng describes the concept as similar to several photographers observing the same scene. Rather than taking standard photographs, each one records raw information about the behavior of light waves. Software later combines these independent measurements into a single image with exceptionally high detail.

This computational approach to phase synchronization removes the need for rigid interferometric setups, which have historically prevented optical synthetic aperture imaging from being widely used in real-world applications.

How MASI Captures and Rebuilds Light

MASI differs from conventional optical systems in two major ways. First, it does not rely on lenses to focus light. Instead, it uses an array of coded sensors placed at different locations within a diffraction plane. Each sensor records diffraction patterns, which describe how light waves spread after interacting with an object. These patterns contain both amplitude and phase information that can later be recovered using computational methods.

After the complex wavefield from each sensor is reconstructed, the system digitally extends the data and mathematically propagates the wavefields back to the object plane. A computational phase synchronization process then adjusts the relative phase differences between sensors. This iterative process increases coherence and concentrates energy in the combined image.
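The phase-synchronization step can be illustrated with a toy case. Suppose two sensors have each produced a complex field at the object plane, identical except for an unknown constant phase offset. The offset that maximizes the energy of the coherent sum has a closed form: the phase of the inner product of the two fields. This is a simplified stand-in and not the iterative procedure from the paper; the field data, the offset value, and the function names are hypothetical.

```python
import cmath
import random

def best_phase_offset(reference, other):
    """Phase theta that maximizes sum |reference + exp(i*theta) * other|^2."""
    cross = sum(r * o.conjugate() for r, o in zip(reference, other))
    return cmath.phase(cross)

def combined_energy(reference, other, theta):
    """Total energy of the coherent sum after rotating `other` by theta."""
    rotation = cmath.exp(1j * theta)
    return sum(abs(r + rotation * o) ** 2 for r, o in zip(reference, other))

rng = random.Random(1)
# Complex field recovered by sensor A over a shared region (random stand-in)
field_a = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(256)]

# Sensor B sees the same field with an unknown global phase offset
unknown_offset = 2.1
field_b = [f * cmath.exp(-1j * unknown_offset) for f in field_a]

theta = best_phase_offset(field_a, field_b)
before = combined_energy(field_a, field_b, 0.0)
after = combined_energy(field_a, field_b, theta)
print(f"recovered offset {theta:.3f} rad, energy gain {after / before:.2f}x")
```

With real data the sensors see different parts of the diffraction plane and noise is present, so an iterative optimization over several sensors takes the place of this one-shot estimate.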

This software-based optimization is the central advance. By aligning data computationally rather than physically, MASI overcomes the diffraction limit and other restrictions that have traditionally governed optical imaging.

A Virtual Aperture With Fine Detail

The final result is a virtual synthetic aperture that is larger than any single sensor. This allows the system to achieve sub-micron resolution while still covering a wide field of view, all without using lenses.

Traditional lenses used in microscopes, cameras, and telescopes force engineers to balance resolution against working distance. To see finer details, lenses usually must be placed very close to the object, sometimes just millimeters away. That requirement can limit access, reduce flexibility, or make certain imaging tasks invasive.

MASI removes this constraint by capturing diffraction patterns from distances measured in centimeters and reconstructing images with sub-micron detail. Zheng compares this to being able to examine the fine ridges of a human hair from across a desk rather than holding it just inches from your eye.

Scalable Applications Across Many Fields

“The potential applications for MASI span multiple fields, from forensic science and medical diagnostics to industrial inspection and remote sensing,” said Zheng. “But what’s most exciting is the scalability – unlike traditional optics that become exponentially more complex as they grow, our system scales linearly, potentially enabling large arrays for applications we haven’t even imagined yet.”

The Multiscale Aperture Synthesis Imager represents a shift in how optical imaging systems can be designed. By separating data collection from synchronization and replacing bulky optical components with software-controlled sensor arrays, MASI shows how computation can overcome long-standing physical limits. The approach opens the door to imaging systems that are highly detailed, adaptable, and capable of scaling to sizes that were previously out of reach.

Tuesday, January 20, 2026

Eric Fossum receives 2026 Draper Prize for Engineering

Link: https://home.dartmouth.edu/news/2026/01/eric-fossum-awarded-draper-prize-engineering

Eric R. Fossum, the John H. Krehbiel Sr. Professor for Emerging Technologies, has been awarded the 2026 Charles Stark Draper Prize for Engineering, which is granted every two years by the National Academy of Engineering and is one of the world’s preeminent honors for engineering achievement.

The NAE recognized Fossum “for innovation, development, and commercialization of the complementary metal-oxide semiconductor active pixel image sensor,” an invention that remains the core technology behind roughly 7 billion cameras produced each year.

“Eric Fossum is a pioneering semiconductor device physicist and engineer whose invention of the CMOS active pixel image sensor, or ‘camera on a chip,’ has transformed imaging across everyday life, industry, and scientific discovery,” the NAE said in announcing the prize, which includes a $500,000 cash award.

The honor is the latest in a string of accolades for Fossum, who in addition to his role as a professor at Thayer School of Engineering also serves as vice provost for entrepreneurship and technology transfer and directs the PhD Innovation Program.

His other honors include the Queen Elizabeth Prize for Engineering, the National Medal for Technology and Innovation awarded at a White House ceremony last year, and a Technical Emmy Award recognizing the transformative impact of Fossum’s invention.

Today, CMOS image sensors, which were intended to make digital cameras for space faster, better, and cheaper, are behind billions of captures in a vast variety of settings—selfies, high-definition videos, dental X-rays, and space images.

“Eric Fossum’s inventions have revolutionized digital imaging across industries,” says President Sian Leah Beilock. “His work is a prime example of how the applied research our faculty foster and undertake can drive innovation and improve our world.”

Research for NASA

Tasked with creating smaller cameras for NASA spacecraft that would use less energy, Fossum led the team that invented and developed the CMOS image sensor technology at the Jet Propulsion Laboratory at the California Institute of Technology in the 1990s. The CMOS image sensor integrated all the essential camera functions on a single piece of silicon—each chip contained arrays of light-sensitive pixels, each with its own amplifier.

Fossum recalls the moment when their first image sensor worked flawlessly in testing. It was a eureka moment, but only in hindsight. His initial reaction was tempered by caution. “It seemed so straightforward that I figured others must have tried this before, and there must be a fatal flaw somewhere. So, it was exhilarating to see that it was working,” he says.

The CMOS sensor was commercialized through Photobit, the company he co-founded and helped lead until its acquisition by Micron.

As the CMOS sensor grew in sophistication, so too did its impact, finding applications in both predictable and surprising ways, such as swallowable pill cameras that can take images inside the body and the explosion of smartphone cameras, which forever changed how we capture and share our lives.

“The impact it has had on social justice has been huge, which I did not anticipate at all, and is truly gratifying. It protects people that might otherwise be powerless, and those with power from false accusations,” Fossum says.

Fossum, a Connecticut native, received a bachelor of science degree in physics and engineering from Trinity College, and a PhD in engineering and applied science from Yale in 1984. Prior to his work at the Jet Propulsion Lab, he was a faculty member at Columbia University. After leading several startups, consulting, and co-founding the International Image Sensor Society, he joined Dartmouth in 2010.

Fossum’s many other honors include the NASA Exceptional Achievement Medal, the IEEE Jun-ichi Nishizawa Medal, and induction into the U.S. Space Foundation Technology Hall of Fame in 1999 and the National Inventors Hall of Fame in 2011. He also served as CEO of Siimpel, developing MEMS devices for autofocus in smartphone camera modules, and worked as a consultant for Samsung on time-of-flight sensor development. He is a member of the National Academy of Engineering and a fellow of the National Academy of Inventors, the Institute of Electrical and Electronics Engineers, and Optica.

Counting photons: The future of imaging

Fossum continues to push the boundaries of imaging. His more recent invention, the quanta image sensor, was developed at Dartmouth and enables high-resolution imaging in extremely low-light conditions.

“We’re working on sensors that can count photons, one at a time,” he says. “Imagine being able to take a photo in almost complete darkness or measuring extremely faint signals in biology. It’s like turning the lights on in a place that was previously invisible to us.”

Fossum and two of his former Dartmouth students co-founded Gigajot to commercialize the technology.

“Eric’s achievements are not the result of a single breakthrough, but of sustained curiosity and a focus on real-world impact,” says Douglas Van Citters ’99, Thayer ’03, ’06, interim Thayer dean. “To this day, he brings exceptional dedication to teaching and research, along with a passion for entrepreneurship that permeates Dartmouth, especially Thayer. And that spirit has inspired generations of engineers at Dartmouth who, like Eric, are committed to improving lives through the technologies they create.”

When asked about where he sees the field of imaging in the next decade, Fossum imagines a world where great images can be captured using a handful of photons and where computational imaging allows humans to see the world in ways eyes themselves never could.

“The ability to capture images in low light will continue to improve,” he predicts. “And we’re likely to see a proliferation of augmented reality technologies that will change the way we experience the world around us.”

In his mind, the grand challenge ahead is miniaturization—creating sensors with pixels so tiny that they become smaller than the wavelength of light itself. With this breakthrough, imaging technology could scale to the point where a single chip contains billions of pixels, opening new possibilities for everything from medical diagnostics to space exploration.

Along with his continuing work on sensors, Fossum draws from his extensive experience in innovation and entrepreneurship in his role as vice provost and in overseeing the PhD Innovation Program.

He says that the program trains students not just to think creatively but to apply their research in ways that have a meaningful impact.

“It is just so much more satisfying to make a real impact with the work that you do,” he says.

The awards ceremony is scheduled for Feb. 18 in Washington, D.C. As he did with the Queen Elizabeth prize, Fossum plans to donate the majority of the Draper Prize funds to STEM-related charities.

Monday, January 19, 2026

Mythic image sensor

Link: https://www.eetimes.com/mythic-rises-from-the-ashes-with-125-million-funding-round/

Mythic Rises from the Ashes with $125 Million Funding Round

Excerpt:

A separate product family, dubbed “Starlight,” will use a Mythic compute chiplet hybrid-bonded under a vision sensor’s photodiode array. The two dies will use less than 1 W between them.

Ozcelik said he noticed a gap in the market for this type of device while previously working at ON Semiconductor.

“One of the biggest challenges for image sensors is low light performance,” he said. “Dynamic range is another major problem, especially in mission critical applications.”

A Mythic AI accelerator could run a neural network to improve low-light performance and dynamic range directly next to the sensor. Image sensors made for applications like cellphones are very small (one-third of an inch), and performance suffers as they get smaller, Ozcelik said. Mythic has a unique opportunity here as its technology is compact, and crucially, it uses very little power, according to Ozcelik (photodiode arrays are extremely thermally sensitive, meaning even a small DSP couldn’t be placed directly under the photodiode array).

Mythic is going to build this sensor and AI accelerator combination itself, and both the accelerator chiplet and the image sensor product will tape out this year, Ozcelik said.

Overall, Ozcelik is pragmatic about the scale of the challenges ahead, particularly given the company’s move into the data center where it will compete with Nvidia.

“[Our advantage] has to be incredibly material,” he said. “It has to be at least one hundred times, hopefully more.”

Saturday, January 17, 2026

Voyant releases solid-state FMCW LiDAR

Press release: https://voyantphotonics.com/news/1075/

New York, NY – December 17, 2025 – Voyant Photonics, the leader in chip-scale frequency-modulated continuous-wave (FMCW) LiDAR, today announced its Helium™ Platform of fully solid-state LiDAR sensors and modules. The solution is built on a silicon photonics chip, enabling a breakthrough architecture designed to deliver unprecedented reliability, integration, and performance for industrial automation, robotics, and mobile autonomy.

Leveraging Voyant’s proprietary Photonic Integrated Circuit (PIC), Helium offers camera-like simplicity and unmatched flexibility. Helium uses a dense two-dimensional photonic focal plane array with fully integrated 2D on-chip beam steering, eliminating unreliable scanning mechanisms such as MEMS and mirrors and leaving no moving parts. The FMCW LiDAR chip leverages a two-dimensional array of surface emitters to create a fully solid-state LiDAR in an ultra-compact, rugged design. Helium also supports multi-sensor configurations, for instance combining wide-FoV short-range and narrow-FoV long-range sensing in one system, delivering the most versatile and cost-effective LiDAR solution for advanced perception applications.

The first Helium prototype release will be demonstrated at Voyant’s booth (LVCC, West Hall, Booth #4875) at CES 2026 in Las Vegas, January 6-9, marking a major milestone in advancing silicon-photonics LiDAR from R&D into high-volume systems that are proliferating Physical AI.

“Helium represents the next step in our mission to deliver the most affordable high performance LiDAR sensor ever,” said Voyant CEO Clément Nouvel. “Industrial and consumer markets demand sensors that are small, cost efficient, and highly reliable. Helium provides all of that while delivering performance that unlocks new classes for intelligent machines.”

A Flexible Platform to Move Solid-State LiDAR Forward

Helium extends the technology foundation proven in Voyant’s Carbon™ product line, bringing full two-dimensional beam steering to a silicon-photonics platform for the first time. The result is a compact, high-precision 4D sensor that meets the highest industry standards for safety and reliability.

Key advantages include:

- True solid-state — no MEMS, polygon scanners, or rotating assemblies

- High-resolution FPA architecture spanning from 12,000 pixels to over 100,000 pixels

- Long-range FMCW performance, per-pixel radial velocity

- Software-defined LiDAR (SDL) enabling adaptive scan patterns and region of interest

- Ultra-compact size: as small as a matchbox (<150 g mass and <50 cm³ volume), ideal for drones, mobile robots, and compact industrial systems
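The per-pixel radial velocity in the list above comes from how FMCW works in general: with a triangular chirp, range shifts the beat frequency equally on the up and down ramps, while Doppler shifts them in opposite directions, so the two can be separated. The sketch below uses the textbook relations; the chirp slope, the 1550 nm wavelength, the sign convention (positive velocity means an approaching target), and the function names are assumptions and not Voyant specifications.

```python
C = 299_792_458.0  # speed of light in m/s

def beat_frequencies(range_m, velocity_mps, chirp_slope_hz_per_s, wavelength_m):
    """Beat frequencies on the up and down ramps of a triangular chirp."""
    f_range = 2.0 * range_m * chirp_slope_hz_per_s / C
    f_doppler = 2.0 * velocity_mps / wavelength_m
    return f_range - f_doppler, f_range + f_doppler

def range_and_velocity(f_up, f_down, chirp_slope_hz_per_s, wavelength_m):
    """Recover range and radial velocity from the two beat frequencies."""
    f_range = 0.5 * (f_up + f_down)
    f_doppler = 0.5 * (f_down - f_up)
    range_m = C * f_range / (2.0 * chirp_slope_hz_per_s)
    velocity_mps = wavelength_m * f_doppler / 2.0
    return range_m, velocity_mps

slope = 1.0e14        # 1 GHz swept in 10 microseconds (hypothetical)
wavelength = 1.55e-6  # 1550 nm (hypothetical)

f_up, f_down = beat_frequencies(50.0, 3.0, slope, wavelength)
est_range, est_velocity = range_and_velocity(f_up, f_down, slope, wavelength)
print(f"beats: {f_up / 1e6:.2f} MHz up, {f_down / 1e6:.2f} MHz down")
print(f"range {est_range:.2f} m, radial velocity {est_velocity:.2f} m/s")
```

Because velocity falls out of a single measurement, no frame-to-frame differencing is needed, which is the usual argument for FMCW over pulsed time-of-flight when motion matters.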

Field of view and range can be tailored with different lenses, and the platform scales from core module options to fully enclosed sensor. Helium is built on a 2D array of surface-emitting photonic antennas combined with a fixed lens and integrated electronics, forming a rugged module ideal for embedded perception.

With no moving parts and monolithic photonic integration, Helium offers an estimated 20× improvement in MTBF over legacy ToF LiDAR architectures, a critical reliability requirement for high-duty-cycle industrial fleets.

Engineered for Scalable Manufacturing

As with the Carbon family, Helium is built entirely on Voyant’s leading proprietary silicon-photonics platform, enabling new levels of performance and integration. This deep integration eliminates the unreliable optical alignments that limit traditional ToF LiDAR manufacturability. Helium leverages the same mature photonics foundry ecosystem as the optical datacom industry, allowing Voyant to scale production toward semiconductor-class cost structures.

From Carbon to Helium: Voyant Advances a Modular LiDAR Platform for Broader Adoption

Voyant established the company’s leadership in compact, cost-optimized FMCW sensing for compute-constrained platforms with its first-generation Carbon™ family, extended last week with the new Carbon 32 and Carbon 64 variants. Helium builds directly on these advances, expanding the architecture from 1D to 2D on-chip beam steering, with higher resolution and a fully solid-state scan engine. Voyant now enables OEMs to integrate its sensing technology directly into their machines by offering module-only access along with full design-in support. This allows partners to build customized, high-performance sensor solutions tailored to their exact requirements.

Helium sensors and modules will be available with multiple resolution and range configurations, supporting a wide choice of field-of-view options—from ultra-wide coverage approaching 180° down to narrower, long-range targeting optics. These modular variants enable OEMs and developers to select and integrate lenses that best suit their application, allowing LiDAR architectures to be tailored for mobile robots, material-handling systems, smart infrastructure, and emerging edge-compute platforms.

Wednesday, January 14, 2026

Leica image sensor development?

There are some recent news reports that Leica is developing its own image sensor.

Petapixel: https://petapixel.com/2026/01/02/leica-is-developing-its-own-image-sensors-again/

Leica Rumors: https://leicarumors.com/2026/01/01/leica-is-developing-its-own-camera-sensor-again-most-likely-for-the-leica-m12-camera.aspx/

Excerpt:

In a recent podcast, Dr. Andreas Kaufmann (Chairman of the Supervisory Board and majority shareholder of Leica Camera AG) confirmed that Leica is again developing their own sensor, most likely for the next Leica M12 camera (Google translation):

Furthermore, as has already become somewhat known, we are also developing our own sensor again. […] Up until the M10, we had a sensor of European origin. It was manufactured by AMS in Graz, or rather, developed by their Dutch development office. And the foundry itself was in Grenoble, a French company. And then there was the transition with the M11 to Sony sensors. It’s no secret that they’re in there. At the same time, we started developing our own sensor again, in a more advanced version. I think we’ve made significant progress with that. We can’t say more at the moment.

Monday, January 05, 2026

Eric Fossum receives 2026 IEEE Nishizawa Medal

Thursday, January 01, 2026

Conference List - June 2026

SPIE Photonics for Quantum - 8-11 June 2026 - Waterloo, Ontario, Canada - Website

AutoSens USA 2026 - 9-11 June 2026 - Detroit, Michigan, USA - Website

Sensor+Test - 9-11 June 2026 - Nuremberg, Germany - Website

Smart Sensing - 10-12 June 2026 - Tokyo, Japan - Website

IEEE/JSAP Symposium on VLSI Technology and Circuits - 14-18 June 2026 - Honolulu, Hawaii, USA - Website

International Conference on Sensors and Sensing Technology (ICCST2026) - 15-17 June 2026 - Florence, Italy - Website

International Conference on IC Design and Technology (ICICDT) - 22-24 June 2026 - Dresden, Germany - Website

Automate 2026 - 22-25 June 2026 - Chicago, Illinois, USA - Website

27th International Workshop on Radiation Imaging Detectors - 28 June-2 July 2026 - Ghent, Belgium - Website

If you know about additional local conferences, please add them as comments.
