Meta (Facebook) publishes a research post, "AI that understands speech by looking as well as hearing":

"People use AI for a wide range of speech recognition and understanding tasks, from enabling smart speakers to developing tools for people who are hard of hearing or who have speech impairments. But oftentimes these speech understanding systems don’t work well in the everyday situations when we need them most: Where multiple people are speaking simultaneously or when there’s lots of background noise. Even sophisticated noise-suppression techniques are often no match for, say, the sound of the ocean during a family beach trip or the background chatter of a bustling street market.

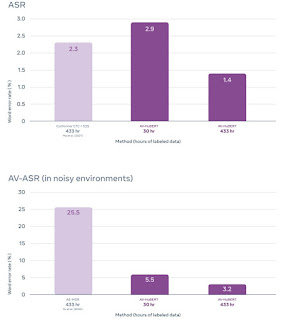

To help us build these more versatile and robust speech recognition tools, we are announcing Audio-Visual Hidden Unit BERT (AV-HuBERT), a state-of-the-art self-supervised framework for understanding speech that learns by both seeing and hearing people speak. It is the first system to jointly model speech and lip movements from unlabeled data — raw video that has not already been transcribed. Using the same amount of transcriptions, AV-HuBERT is 75 percent more accurate than the best audio-visual speech recognition systems (which use both sound and images of the speaker to understand what the person is saying)."

Perhaps this could be used for people who forget to turn their microphone ON during online Teams meetings.

... and for others who forget to turn their microphone OFF when they abuse other people.

Wow, Albert, where does that come from?
